NYT

Among journalists, technology breeds fear of obsolescence, corporations

On 24, Jan 2010 | One Comment | In sociology, tactility | By Dave

Another NYT article about technology anxiety, this one by Brad Stone. Some excerpts:

I’ve begun to think that my daughter’s generation will also be utterly unlike those that preceded it.

Well, it’s better to begin to think than to never start. There’s plenty of room for more people to contemplate and write about the future of technology. We are a friendly bunch! Let me be the first to welcome you, Mr. Stone.

But the newest batch of Internet users and cellphone owners will find these geo-intelligent tools to be entirely second nature, and may even come to expect all software and hardware to operate in this way. Here is where corporations can start licking their chops. My daughter and her peers will never be “off the grid.” And they may come to expect that stores will emanate discounts as they walk by them, and that friends can be tracked down anywhere.

I see, so even though technology will lift people out of poverty and make life longer and more enriching, technology is really just a vehicle for capitalist oppression. And like mad, salivating dogs, corporations will lick their chops. Right.

But the children, teenagers and young adults who are passing through this cauldron of technological change will also have a lot in common. They’ll think nothing of sharing the minutiae of their lives online, staying connected to their friends at all times, buying virtual goods, and owning one über-device that does it all. They will believe the Kindle is the same as a book. And they will all think their parents are hopelessly out of touch.

Of all the mind-blowing changes that technology will bring to our society, the real thought-provoker is that those crazy young’uns will think a Kindle is the same as a book!

Mr. Stone: elevate your perspective. If you need help, read my blog, and read what I link to. Anticipate the future. Integrate it. Do develop a grounded, holistic understanding of where we’re going as a technological society. Don’t develop sociological theories based on your marvel at incremental steps like the Kindle. It won’t help you see the big picture.

The Gray Ditz discovers augmented reality

On 04, Dec 2009 | No Comments | In interfaces | By Dave

I must speak up on this one. Recently in the New York Times Sunday magazine, Rob Walker wrote a foolish article about augmented reality. The first half deals with introducing augmented reality, the Avatar movie, and the Yelp app. But this is his description of the future of this incredible technology:

Core77, the online design magazine, suggested one amusing possibility earlier this year: fold in facial-recognition technology and you could point your phone at Bob from accounting, whose visage is now “augmented” with the information that he has a gay son and drinks Hoegaarden. More recently, a Swedish company has publicized a prototype app that would in fact augment the image of Bob (or whomever) with information from his social-networking profiles — and they aren’t kidding.

Your silly example wrecks the already floundering article, whose original purpose, I assume, was to inform us about an incoming technology. So how and why did you come up with the idea that Bob would be marked with a note saying his son is gay? It implies that augmented reality entails a violation of privacy, which it does not.

How about: “Fold in facial-recognition technology and you could point your phone at Bob from accounting and see him enwrapped in a digital ecosystem—video tattoos bloom across his body like Ray Bradbury’s Illustrated Man, while around his head swirls a halo of tweets, emotions, and memories. It may all be virtual, but the way you see him is augmented in a very real way.”

But instead of offering a creative example to show that the possibilities are endless, you make up an offensive scenario and then sarcastically write “they aren’t kidding,” which subtly attributes your idea to the people who are developing augmented reality. It’s dishonest.

If this sounds off-putting, it’s worth noting that most assessments of the augmented-reality trend include the speculation that the hype will fade.

So you’re trying to put us off augmented reality, and then reassure us that we have nothing to worry about since it won’t happen anyway. Then why write about this topic in the first place? If it’s not news, and it’s not interesting, what’s the point? And “most assessments” is weaselly. If you’ve got the goods, link to them, or at least name your sources.

…Why just look at a restaurant, a colleague or the “Mona Lisa,” when you can “augment” them all?

The scare quotes around the word ‘augment’ make it sound like you’re uncomfortable with using the word; as if it’s jargon. Expand your horizons! You don’t need to use quotes every time you learn a new word!

I don’t mean to pick on you, NYT, but your articles about new technologies are sometimes rather irritating. Instead of writing with genuine interest and optimism about exciting new trends, you project a cynicism that hints at fear and confusion just beneath the surface.

(via DUB For the Future)

Telepresence: a good excuse to stay on Earth?

On 05, Sep 2009 | 3 Comments | In robotics, tactility | By David Birnbaum

In the New York Times, an article that cites the beautiful dream of telepresence to squash the equally beautiful dream of space colonization. Lame.

The Times on tactility

On 10, Dec 2008 | One Comment | In neuroscience, physiology, tactility | By David Birnbaum

The New York Times has published a piece on tactility and haptics. It’s pretty good, and it may inspire some readers to think more about the role touch plays in their lives. Many of the points made in the article are central to my research and my life, so a proper fisking is in order. Let us begin.

Imagine you’re in a dark room, running your fingers over a smooth surface in search of a single dot the size of this period. How high do you think the dot must be for your finger pads to feel it? A hundredth of an inch above background? A thousandth?

I’m hooked. I have no idea, but I’m prepared to be surprised.

Well, take a tip from the economy and keep downsizing.

We dodge the silly reference to arrive at our answer:

Scientists have determined that the human finger is so sensitive it can detect a surface bump just one micron high. All our punctuation point need do, then, is poke above its glassy backdrop by 1/25,000th of an inch—the diameter of a bacterial cell—and our fastidious fingers can find it.

Wow! Seriously, that’s incredible. I wonder whether we can feel bacteria with our fingers in certain situations.

The human eye, by contrast, can’t resolve anything much smaller than 100 microns. No wonder we rely on touch rather than vision when confronted by a new roll of toilet paper and its Abominable Invisible Seam.

This is great: not the whimsical use of capital letters to catalog the phenomenology of toileting, but the comparison of tactile resolution to visual resolution. It’s a common misconception that touch is less precise than vision. After all, the visual processing we perform to communicate (i.e., reading and writing) can seem much more complex than touch. However, vision isn’t necessarily more precise; it’s just more symbol-based, which makes it seem higher resolution because it is associated with complex concepts.
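As a quick sanity check on the article's numbers, here is a minimal Python sketch. It takes the two quoted thresholds (1 micron for the finger, 100 microns for the eye) at face value; the function name is made up for illustration, and the only outside fact used is that one inch is exactly 25.4 mm.

```python
MICRONS_PER_INCH = 25_400  # exact: 1 inch = 25.4 mm = 25,400 microns


def inch_fraction_denominator(microns: float) -> float:
    """Return N such that the given length equals 1/N of an inch."""
    return MICRONS_PER_INCH / microns


finger_threshold_um = 1.0   # bump height quoted in the article
eye_threshold_um = 100.0    # visual limit quoted in the article

# One micron is 1/25,400 of an inch, which the article rounds to 1/25,000.
denominator = inch_fraction_denominator(finger_threshold_um)

# Ratio of the two quoted thresholds.
ratio = eye_threshold_um / finger_threshold_um

print(denominator, ratio)
```

So the "1/25,000th of an inch" figure holds up as a rounding, and the two quoted thresholds differ by a factor of 100.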

Biologically, chronologically, allegorically and delusionally, touch is the mother of all sensory systems. It is an ancient sense in evolution: even the simplest single-celled organisms can feel when something brushes up against them and will respond by nudging closer or pulling away.

I don’t understand “delusionally,” and really wonder what she means. However, it is interesting that simple animals can be said to feel by virtue of their ability to react to their environment, because it says a lot about the concept of feeling: that to feel is to be alive. Nicholas Humphrey wrote in Seeing Red, “The external world is the external world, and it is certainly useful to know what is going on out there. But let the animal never forget that the bottom line is its own bodily well-being, that I am nobody if I’m not me.”

It is the first sense aroused during a baby’s gestation and the last sense to fade at life’s culmination. Patients in a deep vegetative coma who seem otherwise lost to the world will show skin responsiveness when touched by a nurse.

The chronology of touch is crucial. As I’ve written before, touch frames experience. It sets the boundaries of your self, not only in space but also in time.

Like a mother, touch is always hovering somewhere in the perceptual background, often ignored, but indispensable to our sense of safety and sanity. “Touch is so central to what we are, to the feeling of being ourselves, that we almost cannot imagine ourselves without it,” said Chris Dijkerman, a neuropsychologist at the Helmholtz Institute of Utrecht University in the Netherlands. “It’s not like vision, where you close your eyes and you don’t see anything. You can’t do that with touch. It’s always there.”

Which, again, may say something about touch as a concept: that to live is to touch. Try to imagine that you would like to have the experience of “not touching anything.” You jump out of a plane, but realize that your body is being touched all over by air. You float in space (with no clothes on), and splay your limbs out so that you are sure to not even touch yourself. But sensory receptors throughout your body are still reflecting your body state: the angles of your joints, the stretch of your skin. Is it possible to conceive of an unfeeling animal?

Long neglected in favor of the sensory heavyweights of vision and hearing, the study of touch lately has been gaining new cachet among neuroscientists, who sometimes refer to it by the amiably jargony term of haptics, Greek for touch.

Haptics doesn’t mean touch. It relates to the perception and manipulation of one’s environment. And why is it amiable? Because you said so?

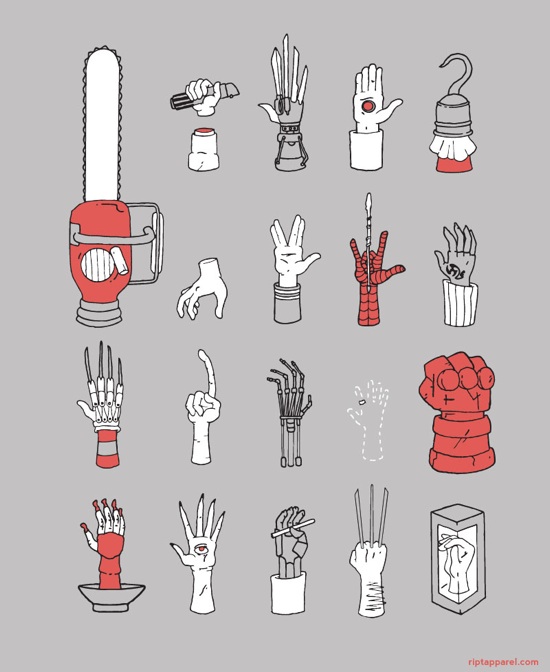

They’re exploring the implications of recently reported tactile illusions, of people being made to feel as though they had three arms, for example, or were levitating out of their bodies, with the hope of gaining insight into how the mind works. Others are turning to haptics for more practical purposes, to build better touch screen devices and robot hands, a more well-rounded virtual life.

It’s interesting that the author assumes studying tactile illusions is impractical. In fact, we haptic interface designers use tactile illusions all the time to achieve design goals.

There’s a fair amount of research into new ways of offloading information onto our tactile sense, said Lynette Jones of the Massachusetts Institute of Technology. To have your cellphone buzzing as opposed to ringing turned out to have a lot of advantages in some situations, and the question is, where else can vibrotactile cues be applied?

For all its antiquity and constancy, touch is not passive or primitive or stuck in its ways. It is our most active sense, our means of seizing the world and experiencing it, quite literally, first hand. Susan J. Lederman, a professor of psychology at Queen’s University in Canada, pointed out that while we can perceive something visually or acoustically from a distance and without really trying, if we want to learn about something tactilely, we must make a move. We must rub the fabric, pet the cat, squeeze the Charmin.

I doubt that Lederman actually said those words, because they’re inaccurate and Lederman is one of the leading scientists in the field. Tactile sensations do not require movement; haptic perception does. Touch is incredibly hard to talk about. To do so successfully we must be particular about terminology.

And with every touchy foray, Heisenberg’s Uncertainty Principle looms large. Contact is a two-way street, and that’s not true for vision or audition, Dr. Lederman said. If you have a soft object and you squeeze it, you change its shape. The physical world reacts back.

This is also important—the act of touching necessarily produces change in the world (and thus, the context of experience), making it, at least figuratively, a singularity, or an infinitesimal feedback loop. However, I’m quite sure the Heisenberg uncertainty principle is not involved. Maybe the author knows that. Did she mean to use a difficult concept in particle physics as a metaphor for another difficult concept in perceptual science? If so, does this make the point clearer or more complicated?

Another trait that distinguishes touch is its widespread distribution. Whereas the sensory receptors for sight, hearing, smell and taste are clustered together in the head, conveniently close to the brain that interprets the fruits of their vigils, touch receptors are scattered throughout the skin and muscle tissue and must convey their signals by way of the spinal cord. There are also many distinct classes of touch-related receptors: mechanoreceptors that respond to pressure and vibrations, thermal receptors primed to sense warmth or cold, kinesthetic receptors that keep track of where our limbs are, and the dread nociceptors, or pain receptors: nerve bundles with bare endings that fire when surrounding tissue is damaged.

The signals from the various touch receptors converge on the brain and sketch out a so-called somatosensory homunculus, a highly plastic internal representation of the body. Like any map, the homunculus exaggerates some features and downplays others. Looming largest are cortical sketches of those body parts that are especially blessed with touch receptors, which means our hidden homunculus has a clownishly large face and mouth and a pair of Paul Bunyan hands.

There’s another part of the sensory homunculus that’s clownishly large. Go there!

“Our hands and fingers are the tactile equivalent of the fovea in vision,” said Dr. Dijkerman, referring to the part of the retina where cone cell density is greatest and visual acuity highest. “If you want to explore the tactile world, your hands are the tool to use.”

Our hands are brilliant and can do many tasks automatically: button a shirt, fit a key in a lock, touch type for some of us, play piano for others. Dr. Lederman and her colleagues have shown that blindfolded subjects can easily recognize a wide range of common objects placed in their hands. But on some tactile tasks, touch is all thumbs. When people are given a raised line drawing of a common object, a bas-relief outline of, say, a screwdriver, they’re stumped. ‘If all we’ve got is contour information,’ Dr. Lederman said, ‘no weight, no texture, no thermal information, well, we’re very, very bad with that.’

This makes sense. If you think about it, the visual qualities of an object have little in common with sensory qualities of the same stimulus perceived through a different sensory channel. Imagine that you took a two dimensional contour of a screwdriver and mapped the X and Y values to pitch and loudness, and then listened to the contour of the screwdriver. It wouldn’t sound much like a screwdriver. In fact, I would bet that if you had someone listen to that, and then listen to silence, she would say that the silence sounded more like a screwdriver than the contour.
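The thought experiment above can be sketched in a few lines of Python. This is only an illustration under assumed values: the pitch range, the sample rate, the number of samples per point, and the eight-point "screwdriver" outline are all made up, and `sonify` is a hypothetical helper rather than an established sonification method.

```python
import math

SAMPLE_RATE = 8000            # samples per second (assumed)
SAMPLES_PER_POINT = 400       # each contour point lasts 0.05 s (assumed)
F_LOW, F_HIGH = 220.0, 880.0  # assumed pitch range in Hz


def sonify(contour):
    """Turn (x, y) points in the unit square into audio samples.

    x sets the pitch of a short sine burst, y sets its loudness.
    """
    samples = []
    for x, y in contour:
        freq = F_LOW + x * (F_HIGH - F_LOW)
        for i in range(SAMPLES_PER_POINT):
            # Each burst restarts at phase zero; a real synthesizer
            # would keep the phase continuous to avoid clicks.
            samples.append(y * math.sin(2 * math.pi * freq * i / SAMPLE_RATE))
    return samples


# A crude, invented outline: a thin shaft joined to a wider handle.
screwdriver = [(0.0, 0.45), (0.6, 0.45), (0.6, 0.3), (1.0, 0.3),
               (1.0, 0.7), (0.6, 0.7), (0.6, 0.55), (0.0, 0.55)]

audio = sonify(screwdriver)
```

The result is a sequence of eight beeps with varying pitch and volume. Nothing in it carries the weight, texture, or grip of the object, which is the same kind of loss the raised line drawing imposes on touch.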

Touch also turns out to be easy to fool. Among the sensory tricks now being investigated is something called the Pinocchio illusion. Researchers have found that if they vibrate the tendon of the biceps, many people report feeling that their forearm is getting longer, their hand drifting ever further from their elbow. And if they are told to touch the forefinger of the vibrated arm to the tip of their nose, they feel as though their nose was lengthening, too.

I want to try this!

Some tactile illusions require the collusion of other senses. People who watch a rubber hand being stroked while the same treatment is applied to one of their own hands kept out of view quickly come to believe that the rubber prosthesis is the real thing, and will wince with pain at the sight of a hammer slamming into it. Other researchers have reported what they call the parchment-skin illusion. Subjects who rubbed their hands together while listening to high-frequency sounds described their palms as feeling exceptionally dry and papery, as though their hands must be responsible for the rasping noise they heard. Look up, little Pinocchio! Somebody’s pulling your strings.

It’s great to see that ordinary journalists are curious about tactility. The article includes many of my own talking points about touch, which is cool. Of course, the way to make someone really appreciate touch is by hugging or hitting them. Alas, writing about touch will have to do for now, until haptic technology makes its glorious arrival.