neuroscience

Brilliant Italian scientists successfully recombine work and pleasure

On 25, Aug 2011 | No Comments | In music, neuroscience, perception | By Dave

A study provides evidence that talking into a person’s right ear can affect behavior more effectively than talking into the left.

One of the best known asymmetries in humans is the right ear dominance for listening to verbal stimuli, which is believed to reflect the brain’s left hemisphere superiority for processing verbal information.

I heavily prefer my left ear for phone calls. So much so that I have trouble understanding people on the phone when I use my right ear. Should I be concerned that my brain seems to be inverted?

Read on and it becomes clear that, beyond their grasp of perceptual psychology, the scientists are terrifically shrewd:

Tommasi and Marzoli’s three studies specifically observed ear preference during social interactions in noisy night club environments. In the first study, 286 clubbers were observed while they were talking, with loud music in the background. In total, 72 percent of interactions occurred on the right side of the listener. These results are consistent with the right ear preference found in both laboratory studies and questionnaires and they demonstrate that the side bias is spontaneously displayed outside the laboratory.

In the second study, the researchers approached 160 clubbers and mumbled an inaudible, meaningless utterance and waited for the subjects to turn their head and offer either their left or their right ear. They then asked them for a cigarette. Overall, 58 percent offered their right ear for listening and 42 percent their left. Only women showed a consistent right-ear preference. In this study, there was no link between the number of cigarettes obtained and the ear receiving the request.

In the third study, the researchers intentionally addressed 176 clubbers in either their right or their left ear when asking for a cigarette. They obtained significantly more cigarettes when they spoke to the clubbers’ right ear compared with their left.

I’m picturing the scientists using their grant money to pay cover at dance clubs and try to obtain as many cigarettes as possible – carefully collecting, then smoking, their data – with the added bonus that their experiment happens to require striking up conversation with clubbers of the opposite sex who are dancing alone. One assumes that, if the test subject happened to be attractive, once the cigarette was obtained (or not) the subject was invited out onto the terrace so the scientist could explain the experiment and his interesting line of work. Well played!

Learning nouns activates separate brain region from learning verbs

On 11, Aug 2011 | No Comments | In cognition, language, neuroscience | By Dave

Another MRI study, this time investigating how we learn parts of speech:

The test consisted of working out the meaning of a new term based on the context provided in two sentences. For example, in the phrase “The girl got a jat for Christmas” and “The best man was so nervous he forgot the jat,” the noun jat means “ring.” Similarly, with “The student is nising noodles for breakfast” and “The man nised a delicious meal for her” the hidden verb is “cook.”

“This task simulates, at an experimental level, how we acquire part of our vocabulary over the course of our lives, by discovering the meaning of new words in written contexts,” explains Rodríguez-Fornells. “This kind of vocabulary acquisition based on verbal contexts is one of the most important mechanisms for learning new words during childhood and later as adults, because we are constantly learning new terms.”

The participants had to learn 80 new nouns and 80 new verbs. By doing this, the brain imaging showed that new nouns primarily activate the left fusiform gyrus (the underside of the temporal lobe associated with visual and object processing), while the new verbs activated part of the left posterior medial temporal gyrus (associated with semantic and conceptual aspects) and the left inferior frontal gyrus (involved in processing grammar).

This last bit was unexpected, at first. I would have guessed that verbs would be learned in regions of the brain associated with motor action. But according to this study, verbs seem to be learned only as grammatical concepts. In other words, knowledge of what it means to run is quite different from knowing how to run. Which makes sense if verb meaning is accessed by representational memory rather than declarative memory.

Skin receptors may contribute to emotion

On 02, Jan 2010 | No Comments | In language, neuroscience, perception, physiology | By Dave

Interoception, the perception of internal feelings, is a funny thing. From our point of view as feeling beings, it seems entirely distinct from exteroceptive channels (sight, hearing, and so on). Interoception is also thought to be how we feel emotions, in addition to bodily functions. When you feel either hungry or lovesick, you are perceiving the state of your internal body, organs, and metabolism. A few years ago it was discovered that there are neural pathways for interoception distinct from ones used to perceive the outside world.

Interesting new research suggests that mechanical skin disturbances caused by pulsating blood vessels may significantly contribute to your perception of your own heartbeat. This is important because it means that skin may play a larger role in emotion than has been previously thought.

The researchers found that, in addition to a pathway involving the insular cortex of the brain — the target of most recent research on interoception — an additional pathway contributing to feeling your own heartbeat exists. The second pathway goes from fibers in the skin to, most likely, the somatosensory cortex, a part of the brain involved in mapping the outside of the body and the sense of posture.

This sounds surprising at first, but it makes perfect sense. There have been other instances where the functions of perceptual systems overlap. For example, it’s been found that skin receptors contribute to kinesthesia: as the joints bend, sensations of skin stretch are used to perceive joint angles. This was also somewhat surprising at the time, because it was thought that perception of one’s joint angles arose exclusively out of the receptors in the joints themselves. The same phenomenon, of skin movement being incidentally involved in some other primary action, is at work here. We might be able to say that any time the skin is moved perceptibly, cutaneous signals are bound up with the percept itself.

In fact, I think this may be a good object lesson in how words about feelings can be very confusing. A few years ago, before the recent considerable progress in mapping the neural signature of interoception, the word ‘interoception’ was used to describe a class of perceptions—ones whose object was the perceiver. Interoception meant the perception of bodily processes: heartbeat, metabolic functioning, and so on. When scientists discovered a neural pathway that serves only this purpose, the word suddenly began to refer not to the perceptual modality, but exclusively to that neural pathway. Now that multiple pathways have been identified, the word will go back to its original meaning: a class of percepts, rather than a particular neural conduit.

Gestures and words are neurologically similar

On 13, Nov 2009 | No Comments | In gesture, language, neuroscience | By Dave

Current thinking in the study of language is that, like a smart search engine that pops up the most suitable Web site at the top of its search results, the posterior temporal region serves as a storehouse of words from which the inferior frontal gyrus selects the most appropriate match. The researchers suggest that, rather than being limited to deciphering words alone, these regions may be able to apply meaning to any incoming symbols, be they words, gestures, images, sounds, or objects.

It doesn’t surprise me that a widely held theory of language is based on our understanding of how search engines work, because we tend to conceptualize our world with metaphors based on technology. But this suggests that many of our abstract theories might be pinned to planned obsolescence schedules, which is kind of amusing.

"The modified modifier"

On 14, Sep 2009 | 2 Comments | In art, neuroscience | By David Birnbaum

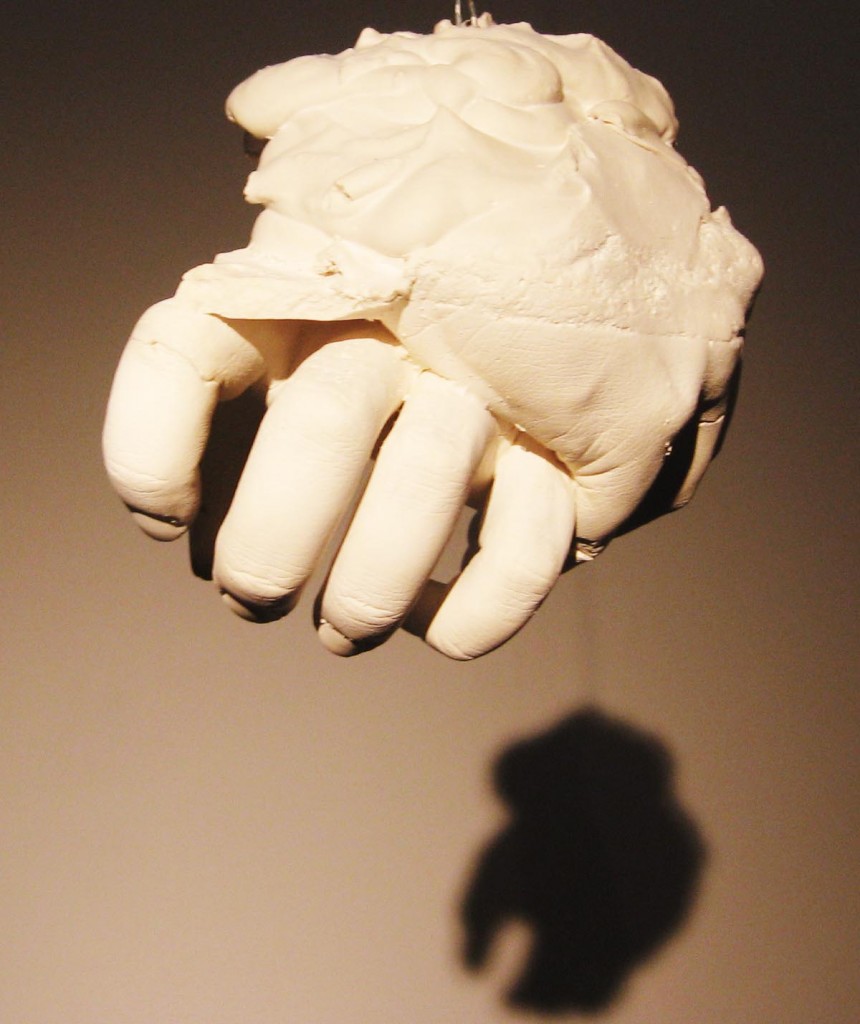

I recently found a mysterious and intriguing blog called Art Is Dead Long Live Art. The author, Erin Voth, explores transmutations of hand and touch.

Hands are the chief organs for physically manipulating the environment. The fingertips contain some of the densest areas of nerve endings in the human body, creating the richest source of tactile feedback to the brain. The image of a human hand is automatically associated with the scene [sic] of touch. So what happens when an image of a human hand is manipulated, sliced up and rearranged?

The modified modifier invokes images of self mutilation, alteration and airbrushing.

…The somatosensory system is a widespread and diverse sensory system comprising of the receptors and processing centres to produce the sensory modalities touch, temperature, body position, and pain. The sensory receptors cover the skin and epithelia, skeletal muscles, bones and joints, internal organs, and the cardiovascular system. While touch is considered one of the five traditional senses the impression of touch is formed from several modalities.

The system reacts to diverse stimuli using different receptors: temperature, mechanical and chemical. Transmission of information from the receptors passes via sensory nerves through tracts in the spinal cord and into the brain. Processing primarily occurs in the primary somatosensory area in the parietal lobe of the cerebral cortex.

At its simplest, the system works when a sensory neuron is triggered by a specific stimulus such as heat; this neuron passes to an area in the brain uniquely attributed to that area on the body—this allows the processed stimulus to be felt at the correct location. The mapping of the body surfaces in the brain is called a homunculus and is essential in the creation of a body image.

…

[James] Gibson and others emphasized the close link between haptic perception and body movement: haptic perception is active exploration. The concept of haptic perception is related to the concept of extended physiological proprioception according to which, when using a tool such as a stick, perceptual experience is transparently transferred to the end of the tool.

Put yourself in my position

On 31, Jul 2009 | No Comments | In cognition, neuroscience | By David Birnbaum

…so you can understand how I feel:

“Our language is full of spatial metaphors, particularly when we attempt to explain or understand how other people think or feel. We often talk about putting ourselves in others’ shoes, seeing something from someone else’s point of view, or figuratively looking over someone’s shoulder,” Sohee Park, report co-author and professor of psychology, said. “Although future work is needed to elucidate the nature of the relationship between empathy, spatial abilities and their potentially overlapping neural underpinnings, this work provides initial evidence that empathy might be, in part, spatially represented.”

“We use spatial manipulations of mental representations all the time as we move through the physical world. As a result, we have readily available cognitive resources to deploy in our attempts to understand what we see. This may extend to our understanding of others’ mental states,” Katharine N. Thakkar, a psychology graduate student at Vanderbilt and the report’s lead author, said. “Separate lines of neuroimaging research have noted involvement of the same brain area, the parietal cortex, during tasks involving visuo-spatial processes and empathy.”

The Hand

On 04, Jun 2009 | One Comment | In art, books, cognition, neuroscience, physiology, tactility | By David Birnbaum

The Hand by Frank Wilson is a rare treat. It runs the gamut from anthropology (both the cultural and evolutionary varieties), to psychology, to biography. Wilson interviews an auto mechanic, a puppeteer, a surgeon, a physical therapist, a rock climber, a magician, and others—all with the goal of understanding the extent to which the human hand defines humanness.

Wilson is a neurologist who works with musicians who have been afflicted with debilitating chronic hand pain. As he writes about his many interviews, a few themes emerge that are especially relevant to our interests here.

Incorporation

Incorporation is the phenomenon of internalizing external objects; it’s the feeling that we all get that a tool has become one with our body.

The idea of “becoming one” with a backhoe is no more exotic than the idea of a rider becoming one with a horse or a carpenter becoming one with a hammer, and this phenomenon itself may take its origin from countless monkeys who spent countless eons becoming one with tree branches. The mystical feel comes from the combination of a good mechanical marriage and something in the nervous system that can make an object external to the body feel as if it had sprouted from the hand, foot, or (rarely) some other place on the body where your skin makes contact with it…

The contexts in which this bonding occurs are so varied that there is no single word that adequately conveys either the process or the many variants of its final form. One term that might qualify is “incorporation”—bringing something into, or making it part of, the body. It is a commonplace experience, familiar to anyone who has ever played a musical instrument, eaten with a fork or chopsticks, ridden a bicycle, or driven a car. (p. 63)

Projection

Projection is the ability to use the hand as a bridge for projecting consciousness from one location to another. (Wilson did not use the word “projection” in the book.) In some ways, projection can be seen as the opposite of incorporation. Master puppeteer Anton Bachleitner:

It takes at least three years of work to say you are a puppeteer. The most difficult job technically is to be able to feel the foot contact the floor as it actually happens. The only way to make the puppet look as though it is actually walking is by feeling what is happening through your hands. The other thing which I think you cannot really train for, but only can discover with very long practice and experience, is a change in your own vision. The best puppeteer after some years will actually see what is happening on the stage as if he himself was located in the head of the puppet, looking out through the puppet’s eyes—he must learn to be in the puppet. This is true not only in the traditional actor’s sense, but in an unusual perceptual sense. The puppeteer stands two meters above the puppet and must be able to see what is on the stage and to move from the puppet’s perspective. Moving is a special problem because of this distance, because the puppet does not move at the same time your hand does. Also, there can be several puppets on the stage at the same time, and to appear realistic they must react to each other as they would in real life. So again the puppeteer must himself be mentally on the stage and able to react as a stage actor would react. This is something I cannot explain, but it is very important for a puppeteer to be able to do this. (pp. 92–93)

Serge Percelly, professional juggler:

[An act is successful] not because you put something in the act that’s really difficult, but because you put something in the act in exactly the right way—in a way that makes it more interesting, not only for me but for the audience as well. I’m just trying somehow to do the act that I would have loved to see. (p. 111)

Skill

Wilson is a musician and a doctor to musicians, so he has special insight into the neurology of musical skill—which he recognizes as a special case of manual skill that involves gesture, communication, and emotion.

Musical skill provides the clearest example and the cleanest proof of the existence of a whole class of self-defined, personally distinctive motor skills with an extended training and experience base, strong ties to the individual’s emotional and cognitive development, strong communicative intent, and very high performance standards. Musical skill, in other words, is more than simply praxis, ordinary manual dexterity, or expertness in pantomime. (p. 207)

The upper-limb (or “output”) requirements for an instrumentalist are not unique either; they depend upon the possession of arms, fingers, and thumbs, specific but idiosyncratic limits on the range of motion at the shoulder, elbow, wrist, hand, and finger joints, variable abilities to achieve repetition rates and forces with specific digital configurations in sequence at multiple contact points on a sound-making device, and so on. Peculiarities in the physical configuration and movement capabilities of the musician’s limbs can be an advantage or disadvantage but are reflected in (and in adverse cases can be overcome through) instrument design: How wide can you make the neck of a guitar? How far apart should the keys be on a piano? Where should the keys be placed on a flute—in general? and for Susan and Peter? (p. 225)

Awareness

Touch experience can be a gateway to awareness, which can in turn heal both the mind and the body. Moshe Feldenkrais invented a form of physical therapy that focuses on stimulating an awareness of touch and movement sensations in order to relieve pain.

Most people slouch, tilt, shuffle, twist, stumble, and hobble along. Why should that be? Was there something wrong with their brains? After considering what dancers and musicians go through to improve control of their movements, [Feldenkrais] guessed that people must either be ignorant of the possibilities or refuse to act on them. So they just heave themselves around, lurching from parking place to office to parking place, utterly oblivious to what they are doing, to their appearance, and even to the sensations that arise from bodily movement. He suspected that people just lose contact with their own bodies. If and when they do notice, it is because they are so stiff that they can’t get out of bed or are in so much pain that they can barely get out of a chair. Then they start noticing…

What [Feldenkrais] was doing did not seem complicated. The goal of the guided movements was not to learn how to move, in the sense of learning to do a new dance step. The goal was not to stretch ligaments or muscles. It was not to increase strength. The goal, as he saw it, was to get the messages moving again and to encourage the brain to pay attention to them. (p. 244)

And his student, Anat Baniel, on the deep psychological roots of movement disorders:

I think working with children has given me this idea, which isn’t often discussed in medicine: a lot of disease—medical disease and emotional “dis-ease”—is an outcome of a lack of full development. It’s not something we can get to just by removing a psychological block…

Of course there are problems due to traumatic events in childhood, or disease—you name it. Feldenkrais said that ideal development would happen if the child was not opposed by a force too big for its strength. When you say to a small child, “Don’t touch that, it’s dangerous!” you create such a forceful inhibition that you actually distort the child’s movement, and growth, in a certain way.

Feldenkrais taught us to look for what isn’t there. Why doesn’t movement happen in the way that it should, given gravity, given the structure of the body, given the brain? For all of us there is a sort of sphere, or range, of movement that should be possible. Some people get only five or ten percent of that sphere, and you have to ask, “What explains the difference between those who get very little and those who get a lot?” Feldenkrais said that the difference is that in the process of development, the body encountered forces that were disproportionate to what the nervous system could absorb without becoming overinhibited—or overly excited, which is a manifestation of the same thing. (p. 252)

Feldenkrais’s approach is fascinating, but there is scant discussion in Wilson’s book about the role of the therapist’s hand in this process. After all, this kind of therapy is wholly reliant on an accidental discovery: that the patient can be made aware of her own body through an external, expert hand radiating pressure and heat. How is this possible? The topic isn’t explored.

There are many, many wonderful things to learn from this book for anyone with an interest in biology, art, music, history, or sports. You can find Frank Wilson on the web at Handoc.com

Turn a female rat into a male by rubbing its tummy

On 13, Jan 2009 | No Comments | In neuroscience | By David Birnbaum

(via Althouse)

The Times on tactility

On 10, Dec 2008 | One Comment | In neuroscience, physiology, tactility | By David Birnbaum

The New York Times has published a piece on tactility and haptics. It’s pretty good, and it may inspire some readers to think more about the role touch plays in their lives. Many of the points made in the article are central to my research and my life, so a proper fisking is in order. Let us begin.

Imagine you’re in a dark room, running your fingers over a smooth surface in search of a single dot the size of this period. How high do you think the dot must be for your finger pads to feel it? A hundredth of an inch above background? A thousandth?

I’m hooked. I have no idea, but I’m prepared to be surprised.

Well, take a tip from the economy and keep downsizing.

We dodge the silly reference to arrive at our answer:

Scientists have determined that the human finger is so sensitive it can detect a surface bump just one micron high. All our punctuation point need do, then, is poke above its glassy backdrop by 1/25,000th of an inch—the diameter of a bacterial cell—and our fastidious fingers can find it.

Wow! Seriously, that’s incredible. I wonder whether we can feel bacteria with our fingers in certain situations.

The human eye, by contrast, can’t resolve anything much smaller than 100 microns. No wonder we rely on touch rather than vision when confronted by a new roll of toilet paper and its Abominable Invisible Seam.

This is great—not the whimsical use of capital letters to catalog the phenomenology of toileting, but the comparison of tactile resolution to visual resolution. It’s a common misconception that touch is less precise than vision. After all, the visual processing we perform to communicate (i.e., reading and writing) can seem much more complex than touch. However, vision isn’t necessarily more precise; it’s just more symbol-based, which makes it seem higher resolution because it is associated with complex concepts.
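For anyone who wants to check the article’s arithmetic, here is a quick sketch in Python. It takes both quoted thresholds at face value (1 micron for touch, 100 microns for vision), which is my own simplification: one figure is a bump height and the other is a visual size, so they are not strictly the same kind of measurement. The function and variable names are made up for illustration.

```python
MICRONS_PER_INCH = 25_400  # 1 inch is defined as 25.4 mm

def microns_to_inch_fraction(microns):
    """Return N such that the given length is 1/N of an inch."""
    return MICRONS_PER_INCH / microns

touch_limit_um = 1     # bump height the article quotes for the fingertip
vision_limit_um = 100  # smallest size the article quotes for the eye

bump_fraction = microns_to_inch_fraction(touch_limit_um)
ratio = vision_limit_um / touch_limit_um

print(f"1 micron is about 1/{bump_fraction:,.0f} of an inch")
print(f"The quoted touch threshold is {ratio:.0f}x finer than the visual one")
```

So the article’s “1/25,000th of an inch” is a fair rounding of 1/25,400, and on these numbers the fingertip comes out about a hundred times finer than the eye.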

Biologically, chronologically, allegorically and delusionally, touch is the mother of all sensory systems. It is an ancient sense in evolution: even the simplest single-celled organisms can feel when something brushes up against them and will respond by nudging closer or pulling away.

I don’t understand “delusionally,” and really wonder what she means. However, it is interesting that simple animals can be said to feel by virtue of their ability to react to their environment, because it says a lot about the concept of feeling: that to feel is to be alive. Nicholas Humphrey wrote in Seeing Red, “The external world is the external world, and it is certainly useful to know what is going on out there. But let the animal never forget that the bottom line is its own bodily well-being, that I am nobody if I’m not me.”

It is the first sense aroused during a baby’s gestation and the last sense to fade at life’s culmination. Patients in a deep vegetative coma who seem otherwise lost to the world will show skin responsiveness when touched by a nurse.

The chronology of touch is crucial. As I’ve written before, touch frames experience. It sets the boundaries of your self, not only in space but also in time.

Like a mother, touch is always hovering somewhere in the perceptual background, often ignored, but indispensable to our sense of safety and sanity. “Touch is so central to what we are, to the feeling of being ourselves, that we almost cannot imagine ourselves without it,” said Chris Dijkerman, a neuropsychologist at the Helmholtz Institute of Utrecht University in the Netherlands. It’s not like vision, where you close your eyes and you don’t see anything. You can’t do that with touch. It’s always there.

Which, again, may say something about touch as a concept: that to live is to touch. Try to imagine that you would like to have the experience of “not touching anything.” You jump out of a plane, but realize that your body is being touched all over by air. You float in space (with no clothes on), and splay your limbs out so that you are sure to not even touch yourself. But sensory receptors throughout your body are still reflecting your body state—the angles of your joints, the stretch of your skin. Is it possible to conceive of an unfeeling animal?

Long neglected in favor of the sensory heavyweights of vision and hearing, the study of touch lately has been gaining new cachet among neuroscientists, who sometimes refer to it by the amiably jargony term of haptics, Greek for touch.

Haptics doesn’t mean touch. It relates to the perception and manipulation of one’s environment. And why is it amiable? Because you said so?

They’re exploring the implications of recently reported tactile illusions, of people being made to feel as though they had three arms, for example, or were levitating out of their bodies, with the hope of gaining insight into how the mind works. Others are turning to haptics for more practical purposes, to build better touch screen devices and robot hands, a more well-rounded virtual life.

It’s interesting that the author assumes studying tactile illusions is impractical. In fact, we haptic interface designers use tactile illusions all the time to achieve design goals.

There’s a fair amount of research into new ways of offloading information onto our tactile sense, said Lynette Jones of the Massachusetts Institute of Technology. To have your cellphone buzzing as opposed to ringing turned out to have a lot of advantages in some situations, and the question is, where else can vibrotactile cues be applied?

For all its antiquity and constancy, touch is not passive or primitive or stuck in its ways. It is our most active sense, our means of seizing the world and experiencing it, quite literally, first hand. Susan J. Lederman, a professor of psychology at Queen’s University in Canada, pointed out that while we can perceive something visually or acoustically from a distance and without really trying, if we want to learn about something tactilely, we must make a move. We must rub the fabric, pet the cat, squeeze the Charmin.

I doubt that Lederman actually said those words, because they’re inaccurate and Lederman is one of the leading scientists in the field. Tactile sensations do not require movement; haptic perception does. Touch is incredibly hard to talk about. To do so successfully we must be particular about terminology.

And with every touchy foray, Heisenberg’s Uncertainty Principle looms large. Contact is a two-way street, and that’s not true for vision or audition, Dr. Lederman said. If you have a soft object and you squeeze it, you change its shape. The physical world reacts back.

This is also important—the act of touching necessarily produces change in the world (and thus, the context of experience), making it, at least figuratively, a singularity, or an infinitesimal feedback loop. However, I’m quite sure the Heisenberg uncertainty principle is not involved. Maybe the author knows that. Did she mean to use a difficult concept in particle physics as a metaphor for another difficult concept in perceptual science? If so, does this make the point clearer or more complicated?

Another trait that distinguishes touch is its widespread distribution. Whereas the sensory receptors for sight, hearing, smell and taste are clustered together in the head, conveniently close to the brain that interprets the fruits of their vigils, touch receptors are scattered throughout the skin and muscle tissue and must convey their signals by way of the spinal cord. There are also many distinct classes of touch-related receptors: mechanoreceptors that respond to pressure and vibrations, thermal receptors primed to sense warmth or cold, kinesthetic receptors that keep track of where our limbs are, and the dread nociceptors, or pain receptors—nerve bundles with bare endings that fire when surrounding tissue is damaged.

The signals from the various touch receptors converge on the brain and sketch out a so-called somatosensory homunculus, a highly plastic internal representation of the body. Like any map, the homunculus exaggerates some features and downplays others. Looming largest are cortical sketches of those body parts that are especially blessed with touch receptors, which means our hidden homunculus has a clownishly large face and mouth and a pair of Paul Bunyan hands.

There’s another part of the sensory homunculus that’s clownishly large. Go there!

“Our hands and fingers are the tactile equivalent of the fovea in vision,” said Dr. Dijkerman, referring to the part of the retina where cone cell density is greatest and visual acuity highest. “If you want to explore the tactile world, your hands are the tool to use.”

Our hands are brilliant and can do many tasks automatically—button a shirt, fit a key in a lock, touch type for some of us, play piano for others. Dr. Lederman and her colleagues have shown that blindfolded subjects can easily recognize a wide range of common objects placed in their hands. But on some tactile tasks, touch is all thumbs. When people are given a raised line drawing of a common object, a bas-relief outline of, say, a screwdriver, they’re stumped. ‘If all we’ve got is contour information,’ Dr. Lederman said, ‘no weight, no texture, no thermal information, well, we’re very, very bad with that.’

This makes sense. If you think about it, the visual qualities of an object have little in common with sensory qualities of the same stimulus perceived through a different sensory channel. Imagine that you took a two dimensional contour of a screwdriver and mapped the X and Y values to pitch and loudness, and then listened to the contour of the screwdriver. It wouldn’t sound much like a screwdriver. In fact, I would bet that if you had someone listen to that, and then listen to silence, she would say that the silence sounded more like a screwdriver than the contour.
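If you wanted to actually try the thought experiment, a minimal sketch in Python might look like this. Everything in it is an assumption for illustration: the mapping (X to pitch, Y to loudness), the frequency range, the note length, and especially the crude eight-point “screwdriver” outline, which I made up. It only produces raw samples; writing them to a sound file is left out.

```python
import math

def sonify_contour(points, sample_rate=8000, note_seconds=0.05,
                   low_hz=220.0, high_hz=880.0):
    """Turn (x, y) contour points, each in [0, 1], into audio samples.

    Hypothetical mapping: x sets pitch (linear between low_hz and high_hz),
    y sets loudness (amplitude between 0 and 1).
    """
    samples = []
    phase = 0.0  # carried across points so the tone has no clicks
    samples_per_point = round(sample_rate * note_seconds)
    for x, y in points:
        freq = low_hz + x * (high_hz - low_hz)
        for _ in range(samples_per_point):
            phase += 2.0 * math.pi * freq / sample_rate
            samples.append(y * math.sin(phase))
    return samples

# A made-up outline: a long thin shaft on the left, a fatter handle on the right.
screwdriver = [(0.0, 0.45), (0.6, 0.45), (0.6, 0.3), (1.0, 0.3),
               (1.0, 0.7), (0.6, 0.7), (0.6, 0.55), (0.0, 0.55)]

audio = sonify_contour(screwdriver)
```

The result is a short series of gliding beeps. Nothing about the mapping preserves what makes a screwdriver recognizable, which is the point of the thought experiment.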

Touch also turns out to be easy to fool. Among the sensory tricks now being investigated is something called the Pinocchio illusion. Researchers have found that if they vibrate the tendon of the biceps, many people report feeling that their forearm is getting longer, their hand drifting ever further from their elbow. And if they are told to touch the forefinger of the vibrated arm to the tip of their nose, they feel as though their nose was lengthening, too.

I want to try this!

Some tactile illusions require the collusion of other senses. People who watch a rubber hand being stroked while the same treatment is applied to one of their own hands kept out of view quickly come to believe that the rubber prosthesis is the real thing, and will wince with pain at the sight of a hammer slamming into it. Other researchers have reported what they call the parchment-skin illusion. Subjects who rubbed their hands together while listening to high-frequency sounds described their palms as feeling exceptionally dry and papery, as though their hands must be responsible for the rasping noise they heard. Look up, little Pinocchio! Somebody’s pulling your strings.

It’s great to see that ordinary journalists are curious about tactility. The article includes many of my own talking points about touch, which is cool. Of course, the way to make someone really appreciate touch is by hugging or hitting them. Alas, writing about touch will have to do for now, until haptic technology makes its glorious arrival.

Embodied music cognition

On 08, Oct 2008 | No Comments | In books, cognition, music, neuroscience | By David Birnbaum

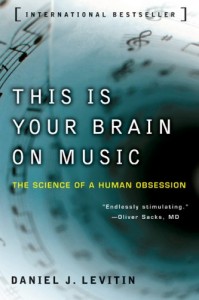

This is Your Brain on Music is a great introductory book on the neuroscience of music. Although I found it weighted a bit too much toward popular science for my liking, that was its stated purpose, and there was still plenty of good information in it.

Here we have an explanation of musical timing as an analogy for a moving body:

Virtually every culture and civilization considers movement to be an integral part of music making and listening. Rhythm is what we dance to, sway our bodies to, and tap our feet to… It is no coincidence that making music requires the coordinated, rhythmic use of our bodies, and that energy be transmitted from body movements to a musical instrument. (57)

‘Tempo’ refers to the pace of a musical piece—how quickly or slowly it goes by. If you tap your foot or snap your fingers in time to a piece of music, the tempo of the piece will be directly related to how fast or slow you are tapping. If a song is a living, breathing entity, you might think of the tempo as its gait—the rate at which it walks by—or its pulse—the rate at which the heart of the song is beating. The word ‘beat’ indicates the basic unit of measurement in a musical piece; this is also called the ‘tactus’. Most often, this is the natural point at which you would tap your foot or clap your hands or snap your fingers. (59)
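The link between tapping and tempo in that passage is simple arithmetic: the tempo in beats per minute is 60 divided by the average time between taps. Here is a rough sketch, assuming you have recorded tap times in seconds; the function name `tempo_bpm` is my own and not something from the book.

```python
def tempo_bpm(tap_times):
    """Estimate tempo in beats per minute from tap timestamps (seconds).

    Assumption (illustrative only): each tap lands on one beat,
    so the mean gap between taps is the length of one beat.
    """
    if len(tap_times) < 2:
        raise ValueError("need at least two taps to measure an interval")
    # Time elapsed between each pair of consecutive taps.
    intervals = [later - earlier
                 for earlier, later in zip(tap_times, tap_times[1:])]
    mean_interval = sum(intervals) / len(intervals)
    return 60.0 / mean_interval


# Four taps spaced half a second apart.
print(tempo_bpm([0.0, 0.5, 1.0, 1.5]))
```

Taps half a second apart work out to 120 beats per minute, which is a brisk walking gait, to borrow Levitin's metaphor.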

Levitin also delves into the possible evolutionary reasons for music, noting that music seems to always go with dance, and that the concept of the expert musical performer is very recent:

When we ask about the evolutionary basis for music, it does no good to think about Britney or Bach. We have to think about what music was like around fifty thousand years ago. The instruments recovered from archeological sites can help us understand what our ancestors used to make music, and what kinds of melodies they listened to. Cave paintings, paintings on stoneware, and other pictorial artifacts can tell us something about the role that music played in daily life. We can also study contemporary societies that have been cut off from civilization as we know it, groups of people who are living in hunter-gatherer lifestyles that have remained unchanged for thousands of years. One striking find is that in every society of which we’re aware, music and dance are inseparable.

The arguments against music as an adaptation consider music only as disembodied sound, and moreover, as performed by an expert class for an audience. But it is only in the last five hundred years that music has become a spectator activity—the thought of a musical concert in which a class of “experts” performed for an appreciative audience was virtually unknown throughout our history as a species. And it has only been in the last hundred years or so that the ties between musical sound and human movement have been minimized. The embodied nature of music, the indivisibility of movement and sound, the anthropologist John Blacking writes, characterizes music across cultures and across times. (257)

I agree. Even though we may use modern technology to exploit musical cognitive faculties for maximum effect, the idea that music/dance is a counter-evolutionary accident seems wrong to me.

You can find the website that accompanies the book at yourbrainonmusic.com.

Targeted reinnervation

On 28, Sep 2008 | No Comments | In neuroscience, physiology, transhumanism | By David Birnbaum

A woman’s nerves have been rewired to help her control a prosthetic limb, an experimental procedure for amputees called targeted reinnervation. It’s a fascinating concept, and it works: a noncritical muscle’s nerves are deactivated, and the severed efferent (motor) nerve fibers from the missing limb are inserted into the muscle. The brain can then control a prosthesis by sending motor signals to the muscle. Additionally, the afferent (sensory) nerve fibers from the severed limb are moved to the skin above the same muscle. Stimulation of those nerves is now mapped as sensation originating from the prosthesis. Claudia Mitchell can control her prosthetic arm by sending motor signals to her chest muscle, and experiences cutaneous sensations in her prosthetic arm when the skin on her chest is touched or its temperature is changed.

Of course, rather than simply explaining the news in as clear a way as possible, ABC proceeds to extremes: “Mitchell has become the first real ‘Bionic Woman’: part human, part computer.” She’s first and she’s real, and you can tell because ABC even awarded her the official capitalized title of “Bionic Woman.” Presumptuous, and also inaccurate. In fact, this technology is exciting because it doesn’t have much to do with computers at all. Rather than relying on predictive software to control the motors in the prosthesis (which was the technique used in this BBC producer’s prosthetic foot), Ms. Mitchell controls her hardware directly, with her brain.

In any case, the success of this procedure has led to some interesting discoveries, such as the fact that Ms. Mitchell retains a 1-to-1 mapping of her reinnervated afferent fibers to locations on her prosthesis.

Paul Marasco, a touch specialist and research scientist with the Rehabilitation Institute of Chicago, was brought in to study the hand sensations that Mitchell feels in her chest. He put together a detailed map, connecting what Mitchell’s missing hand feels with the corresponding locations on her chest.

Depending on where you touch her chest, “she has the distinct sense of her joints being bent back in particular ways, and she has feelings of her skin being stretched,” Marasco said.

If a human’s nervous system can be extended to include a prosthesis, it isn’t a stretch to imagine that it can be interfaced with external signal networks, such as other humans’ nervous systems, or the internet. How will this affect embodied cognition? Societal structure? Consciousness?

Here’s a video of Claudia in action. Seems like she’s got style too—the upper part of her artificial arm is covered in a camouflage pattern. Seen!

How many neurons make a feeling?

On 28, Mar 2008 | No Comments | In neuroscience, tactility | By David Birnbaum

One:

The Dutch and German study, published in Nature, found that stimulating just one rat neuron could deliver the sensation of touch.

“The generally accepted model was that networks or arrays make decisions and that the influence of a single neuron is smaller, but this work and other recent studies support a more important role for the individual neuron.

“These studies drive down the level at which relevant computation is happening in the brain.”

I think it also supports the idea (discussed in detail in my thesis) that the word “touch” serves as a baseline indicator for subjective experience.

Whiskers as haptic sensor arrays

On 26, Feb 2008 | No Comments | In cognition, neuroscience, physiology, robotics | By David Birnbaum

Whiskers provide animals with complex perceptual content. In fact, all the things that whiskers actually do are fascinating.

The dimensionality of the data can be modeled according to how an animal moves them through space:

Rat whiskers move actively in one dimension, rotating at their base in a plane roughly parallel to the ground. When the whiskers hit an object, they can be deflected backwards, upwards or downwards by contact with the object. The mechanical bending of the whisker activates many thousands of sensory receptors located in the follicle at the whisker base. The receptors, in turn, send neural signals to the brain, where a three-dimensional image is presumably generated.

Hartmann and Solomon showed that their robotic whiskers could extract information about object shape by “whisking” (sweeping) the whiskers across a small sculpted head, which was chosen specifically for its complex shape. As the whiskers move across the object, strain gauges sense the bending of the whiskers and thus determine the location of different points on the head. A computer program then “connects the dots” to create a three-dimensional representation of the object.

More on that “three-dimensional image” from the end of the first paragraph — whiskers indeed construct a high resolution spatial map:

Based on discoveries in primates and cats, scientists previously thought that highly refined maps representing the complexities of the external world were the exclusive domain of the visual cortex in mammals. This new map is a miniature schematic, representing the direction a whisker is moved when it brushes against an object.

“This study is a great counter example to the prevailing view that only the visual cortex has beautiful, overlapping, multiplexed maps,” said Christopher Moore, a principal investigator at the McGovern Institute and an assistant professor in the Department of Brain and Cognitive Sciences, where he holds the Mitsui Career Development Chair.

Researchers are now working towards developing code for a whisker-like sensor array to be used for robotics. Could this software have human interface applications as well?

This reminds me of the impressive and thought-provoking Haptic Radar/Extended Skin Project. Although the sensing medium in that case was ultrasound rather than a deformable, physical substrate, and the resolution of the stimulators much lower, the researchers state that they intend to make the system more whisker-like as they develop it.

[via Science Daily]