mobility

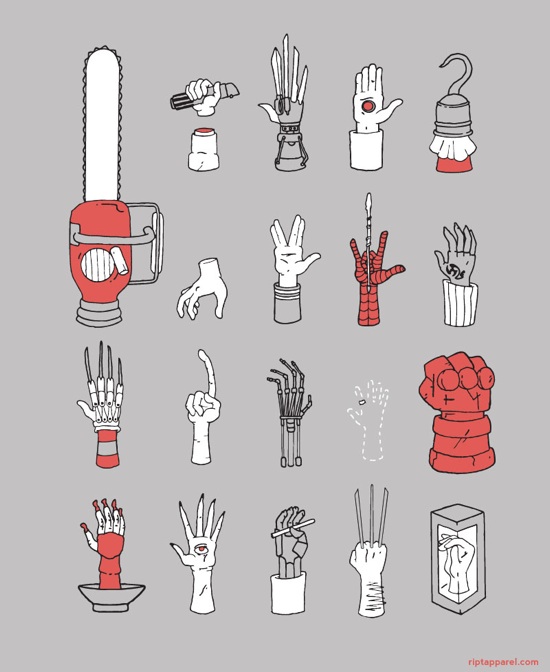

Smartphone as prosthesis

On 27, Sep 2011 | One Comment | In transhumanism | By Dave

Noticing that many of the same sensors, silicon, and batteries used in smartphones are being used to create smarter artificial limbs, Fast Company draws the conclusion that the market for smartphones is driving technology development useful for bionics. While interesting enough, the article doesn’t continue to the next logical and far more interesting possibility: that phones themselves are becoming parts of our bodies. To what extent are smartphones already bionic organs, and how could we tell if they were? I’m actively researching design in this area – stay tuned for more about the body-incorporated phone.

What can gardens teach us about digitality?

On 20, Dec 2009 | One Comment | In sociology, tactility | By Dave

The Washington Post has an intriguing piece about a book dealing with gardens (of all things) and digitality. The author, Robert Harrison, argues that gardens immerse us in place and time, and that digital devices do not. The article jumps all over the place, talking about mobile communication, cultural anthropology, and evolution, but it makes several important points.

To start, attending to digital devices is said to preclude being present:

“You know you have crossed the river into Cyberland when the guy coming your way has his head buried in the hand-held screen. He will knock into you unless you get out of his way, and don’t expect an apology. It’s as if you aren’t there. Maybe you’re not.”

I’m very interested in language like this, because it’s a metaphor in the process of becoming a literalism. Today, saying that you’re not there because you’re looking at a device is metaphorical, but I think the meaning of ‘being there’ is going to change to mean wherever you are engaged, no matter where that place is geographically in relation to you. “I’ll be right there!” he said as he plugged his brain into the internet. Moments later he was standing in the garden…

The article quotes a study that claims that the average adult spends 8.5 hours a day visually engaged with a screen. 24-hour days, split up by 8.5 hours of screen and 8 hours of sleep—the Screen Age really does deserve its own delineation. It’s a significant and unique period in human history.

And just as in sleep, perhaps disturbingly so, people looking at screens can resemble dead people (or, more accurately, un-dead people):

…We have become digital zombies.

But I think the resemblance is entirely superficial. Sure, if you only go by appearances, an army of screen-starers is a frightening sight to imagine. But scratch the surface and you realize that screen-staring is a far cry from zombism. The social spaces we are constructing while we stare, the vast data stores we are integrating—these activities remind me of life. Teeming life. Our bodies may be sedentary, our eyes fixed on a single glowing rectangle, but what is going on is indisputably amazing. On the microscopic level there are billions of electrical fluctuations per moment, both in our brains and our machines, and they are actively correlating and adapting to each other. Patterns of thought are encoded in a vast network of micro-actions and reactions that span the planet. And what is it like for you when you stare at a computer or phone screen? You juggle complex, abstract symbolic information at speeds never before achieved by human brains, and you’re also inputting—emitting—hundreds of symbols with the precise motor skills of your fingers. You are recognizing pictures and signs, searching for things, finding them, figuring stuff out, adjusting your self image, and nurturing your dreams. There is no loss of dignity or life in this. But I admit that we all look like zombies while we do it, and I suppose that is pretty weird.

The article goes on to quote author Katherine Hayles, who says she thinks humans are in a state of symbiosis with their computers:

“If every computer were to crash tomorrow, it would be catastrophic,” she says. “Millions or billions of people would die. That’s the condition of being a symbiont.”

Let that sink in. At any moment a catastrophic event could fry our entire digital infrastructure in one fell swoop. Our civilization teeters on a house of cards as high as Mount Everest! To me this is the only reason the Screen Age should be frightening, but it’s very frightening indeed.

Turning now to sensation, Hayles mentions that touch and smell are suppressed by bipedalism:

“You could say when humans started to walk upright, we lost touch with the natural world. We lost an olfactory sense of the world, but obviously bipedalism paid big dividends.”

Note that bipedalism is associated with a loss of tactility, but it has also been credited with enabling more complex manual dexterity. Maybe there is a general principle here: ambient tactile awareness is inversely correlated with prehension.

After a brief ensuing discussion of dualism and the advent of location-based services, we’re back to the gardens:

The difficulty, Harrison argues, is that we are losing something profoundly human, the capacity to connect deeply to our environments… “For the gardens to become fully visible in space, they require a temporal horizon that the age makes less and less room for.”

I like the point about a garden’s time horizon. But it’s used to complain about the discomfort of our rushed lifestyle, which I would argue is separable from communication technology. The heads-buried-in-screens thing doesn’t really affect whether we have time for gardens.

An interesting footnote offered by Harrison is that the Czech playwright Karel Čapek, who introduced the word ‘robot,’ was a gardener.

Finally, this is the photo that accompanies the article:

…captioned, “Fingers on the political pulse.” The article is about looking and being present, but the picture is about hands, heartbeat, and hapticity.

(via Althouse)

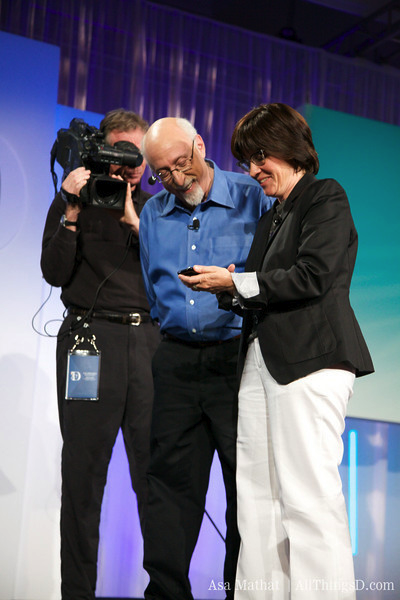

Full-length video of All Things Digital

On 25, Jun 2009 | One Comment | In outreach | By David Birnbaum

The full-length video of our presentation at the Wall Street Journal’s All Things Digital conference is now online!

Immersion mobile research in the Wall Street Journal

On 03, Jun 2009 | No Comments | In outreach | By David Birnbaum

But the wider deployment of haptic-enabled phones will open the door to new applications.

[Immersion] says that in the next nine months three mobile carriers will be launching applications it created that allow users to communicate emotions nonverbally. For example, frustration can be communicated by shaking the phone, which will create a vibration that will be felt by the other party. That person might then choose to respond with what the developers call a “love tap”, a rhythmic tapping on the phone that will produce a heartbeat-like series of vibrations on the other party’s phone.

Immersion’s general manager of touch business, Craig Vachon, says the next step is developing a phone that can deliver a physical sensation based on the position of a finger on a touch screen. One application would be a touch-screen keyboard that feels like a traditional keyboard…

“The technology is such that we could blindfold you and you would be able to feel the demarcation between the keys of a keypad, on a completely flat touch screen,” Mr. Vachon says.
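The “love tap” described in the excerpt above can be pictured as a simple timing pattern. What follows is only a rough sketch of the idea, not Immersion’s implementation: the function name `heartbeat_pattern`, the pulse and gap durations, and the (vibrate, pause) representation are all assumptions made for illustration.

```python
def heartbeat_pattern(bpm=60, beats=3, pulse_ms=80, gap_ms=120):
    """Build a 'lub-dub' vibration pattern.

    Returns a list of (vibrate_ms, pause_ms) pairs. All durations are
    illustrative guesses, not values taken from any real haptic product.
    """
    period_ms = round(60_000 / bpm)                 # length of one full beat
    rest_ms = period_ms - (2 * pulse_ms + gap_ms)   # silence after the "dub"
    if rest_ms <= 0:
        raise ValueError("bpm too high for the chosen pulse and gap lengths")

    pattern = []
    for _ in range(beats):
        pattern.append((pulse_ms, gap_ms))   # "lub", then a short gap
        pattern.append((pulse_ms, rest_ms))  # "dub", then rest until the next beat
    return pattern


if __name__ == "__main__":
    for vibrate_ms, pause_ms in heartbeat_pattern(bpm=72, beats=2):
        print(f"vibrate {vibrate_ms} ms, pause {pause_ms} ms")
```

Two short pulses followed by a longer rest is what makes the rhythm read as a heartbeat rather than a generic buzz; a real actuator would also need amplitude control, which this sketch leaves out.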

Walt Mossberg smiles while he uses my demo

On 28, May 2009 | No Comments | In outreach | By David Birnbaum

My team, the Advanced Research Group at Immersion Corporation, had the extraordinary privilege of presenting our cutting-edge designs on stage at the Wall Street Journal’s All Things Digital Conference. It’s so rewarding to see our top-secret hard work finally unveiled!

Here’s the highlight video posted on the All Things Digital website:

For more, read the full press release.

UPDATE:

Engadget: Immersion demos new TouchSense multitouch, haptic keyboard at D7.

Gizmodo: Immersion’s new haptic touchscreen tech encourages corny iPhone romance.

Electricpig: Multitouch tactile keyboard demoed

Bringing surface relief to mobile touchscreens

On 01, Dec 2008 | No Comments | In interfaces | By David Birnbaum

invisual is an interesting design concept: a tactile screen cover bundled with accompanying software which together form a mobile computing solution for the visually impaired. The silicone screen cover displays tactile symbols and icons, and the software places buttons behind the surface features. Nice photos at the link.

(via Engadget)

Vibrotactile Braille wireless phone

On 11, Apr 2008 | No Comments | In interfaces | By David Birnbaum

A blind Japanese professor has prototyped a wireless phone with an integrated vibrating Braille display:

A former teacher at a school for the blind and a professor from Tsukuba University of Technology have developed a cell phone that sends out vibrations representing Braille symbols to enable people with sight and hearing difficulties to communicate… When a caller pushes numbers on the keypad corresponding to Braille symbols, two terminals attached to the receiver’s phone vibrate at a specific rate to create a message.

Japanese Braille uses six dots to represent the Japanese syllabary. Using the numbers 1, 2, 4, 5, 7 and 8, on cell phones to represent these six dots, it’s possible to form Braille symbols. The developers are now working to make the devices that convert keypad information into vibrations smaller than their current size (16 centimeters by 10 centimeters). If vibration-based Braille is applied more widely, it may enable information to be “broadcast” to several blind people at once.

The idea of representing one bit of Braille with one cell phone key has a certain elegance, but I’m not sure how useful it would be. Readers of Braille are used to using their fingertips, not their entire palms. On the other hand, the article is so vague that I might not even be understanding what they’re up to.
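To make the key-per-dot idea concrete, here is a minimal sketch of how the six keys might line up with a Braille cell. The article doesn’t say which key stands for which dot, so the mapping below is my assumption: the left two columns of the keypad mirror the cell’s two columns (dots 1 to 3 down the left, dots 4 to 6 down the right). The names `DOT_TO_KEY`, `keys_for_dots`, and `render_cell` are hypothetical.

```python
# ASSUMPTION: keypad keys 1/4/7 stand for the left column of the Braille cell
# (dots 1, 2, 3) and keys 2/5/8 for the right column (dots 4, 5, 6).
# The source article does not specify the actual assignment.
DOT_TO_KEY = {1: "1", 2: "4", 3: "7", 4: "2", 5: "5", 6: "8"}


def keys_for_dots(dots):
    """Return the keypad keys (sorted) to press for a set of raised dots."""
    unknown = set(dots) - set(DOT_TO_KEY)
    if unknown:
        raise ValueError(f"not a six-dot Braille dot number: {sorted(unknown)}")
    return sorted(DOT_TO_KEY[d] for d in dots)


def render_cell(dots):
    """Draw the cell as 3 rows by 2 columns: 'o' is raised, '.' is flat."""
    rows = [(1, 4), (2, 5), (3, 6)]
    return "\n".join(
        "".join("o" if d in dots else "." for d in row) for row in rows
    )


if __name__ == "__main__":
    cell = {1, 2, 4}
    print(render_cell(cell))
    print("press keys:", ", ".join(keys_for_dots(cell)))
```

Under this assumed layout the caller is effectively drawing the cell on the keypad, which is the elegant part; it says nothing about how the receiving terminals turn those key presses into vibrations.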

(via Engadget)

Immersion’s mobile haptics demo

On 21, Mar 2008 | No Comments | In outreach | By Dave

A short demonstration of Immersion Corporation’s vibrotactile feedback system for touch screens:

The interviewer calls it a “genuinely remarkable technology.” My job right now is to develop vibrotactile applications for the phone in the video, so it makes me happy to see that this kind of thing is generating excitement!

Third party haptic keyboard for iPhone

On 02, Mar 2008 | No Comments | In code | By David Birnbaum

A downloadable haptic keyboard for the iPhone.

Totally missing the point, Gizmodo asks, “Does anyone care?”, noting that users are already accustomed to the iPhone’s non-tactile keyboard, and that this particular haptic keyboard is buggy. Whatever; research clearly indicates that vibrotactile feedback for surface keyboards enhances interaction. Kudos to these folks at the University of Glasgow for beginning an open project on a popular platform to develop this much-needed software.

Vibrating Bluetooth mobile peripherals

On 08, Feb 2008 | No Comments | In interfaces | By Dave

Recently a friend lamented the uselessness of vibration feedback for females, who tend to carry their mobile devices in a handbag rather than a pocket. Solution: a fashionable vibrating Bluetooth bracelet. The company that designed it also offers the gadget in a wristwatch form factor.

(via Boing Boing)

UPDATE: Aforementioned friend notes that the bracelet is not, in fact, fashionable. My mistake!

Mobile haptics in The Economist

On 20, Mar 2007 | No Comments | In interfaces | By Dave

The print edition of The Economist came out with an interesting article on mobile haptics last week, which is now available online. It points out that the iPhone gives up what little haptic feedback a normal phone provides in favor of a touch screen, and notes that Samsung’s SCH-W559, not yet available in North America, will utilize an active haptic display. That product uses Immersion’s VibeTonz technology, which I haven’t felt. Although the vibration signal is said to be “very precise,” I find vibration motors heavy and bulky, with a weaker transient response and lower resolution than other actuation methods. But the first generation of mobile haptics is already getting by with unbalanced motors, so it makes some sense to try to refine them until other actuators hit the market.

The article also interviews Vincent Hayward of McGill University’s Centre for Intelligent Machines, who has been developing skin-stretching techniques to simulate tactile stimuli normal to the contact area. I saw him present his “THMB” system at the Enactive conference in Montreal, and it looked damn cool. (“Looked,” not “felt,” because again… no demo. Maybe I’ll make an appointment to walk over there one of these days and check it out.) It’s a MEMS device, positioned to be felt by the tip of the thumb (essentially the same place as Sony’s scroll wheel).

Speaking of which, I recently read about Sony’s own mobile vibrotactile platform, which it calls the TouchEngine: an extremely thin vibration actuator made out of piezoelectric film. But it’s not for the thumbtip; it’s installed on the back of the device, and sits in contact with the user’s palm.