language

Learning nouns activates separate brain region from learning verbs

On 11, Aug 2011 | No Comments | In cognition, language, neuroscience | By Dave

Another MRI study, this time investigating how we learn parts of speech:

The test consisted of working out the meaning of a new term based on the context provided in two sentences. For example, in the phrase “The girl got a jat for Christmas” and “The best man was so nervous he forgot the jat,” the noun jat means “ring.” Similarly, with “The student is nising noodles for breakfast” and “The man nised a delicious meal for her” the hidden verb is “cook.”

“This task simulates, at an experimental level, how we acquire part of our vocabulary over the course of our lives, by discovering the meaning of new words in written contexts,” explains Rodríguez-Fornells. “This kind of vocabulary acquisition based on verbal contexts is one of the most important mechanisms for learning new words during childhood and later as adults, because we are constantly learning new terms.”

The participants had to learn 80 new nouns and 80 new verbs. By doing this, the brain imaging showed that new nouns primarily activate the left fusiform gyrus (the underside of the temporal lobe associated with visual and object processing), while the new verbs activated part of the left posterior medial temporal gyrus (associated with semantic and conceptual aspects) and the left inferior frontal gyrus (involved in processing grammar).

This last bit was unexpected, at first. I would have guessed that verbs would be learned in regions of the brain associated with motor action. But according to this study, verbs seem to be learned only as grammatical concepts. In other words, knowledge of what it means to run is quite different than knowing how to run. Which makes sense if verb meaning is accessed by representational memory rather than declarative memory.

Audio analysis of baby cries can differentiate “normal” fussiness from pain

On 10, Aug 2011 | No Comments | In language | By Dave

I’m a new parent of twin boys, and I could really use something like this. But it would be even better if the algorithm could break down the “normal” cries into specific needs. Mr. Nagashima, you are doing God’s work; faster, please.

Skin receptors may contribute to emotion

On 02, Jan 2010 | No Comments | In language, neuroscience, perception, physiology | By Dave

Interoception, the perception of internal feelings, is a funny thing. From our point of view as feeling beings, it seems entirely distinct from exteroceptive channels (sight, hearing, and so on). Interoception is also thought to be how we feel emotions, in addition to bodily functions. When you feel either hungry or lovesick, you are perceiving the state of your internal body, organs, and metabolism. A few years ago it was discovered that there are neural pathways for interoception distinct from ones used to perceive the outside world.

Interesting new research suggests that mechanical skin disturbances caused by pulsating blood vessels may significantly contribute to your perception of your own heartbeat. This is important because it means that skin may play a larger role in emotion than has been previously thought.

The researchers found that, in addition to a pathway involving the insular cortex of the brain — the target of most recent research on interoception — an additional pathway contributing to feeling your own heartbeat exists. The second pathway goes from fibers in the skin to most likely the somatosensory cortex, a part of the brain involved in mapping the outside of the body and the sense of posture.

This sounds surprising at first, but it makes perfect sense. There have been other instances where the functionality of perceptual systems overlaps. For example, it’s been found that skin receptors contribute to kinesthesia: as the joints bend, sensations of skin stretch are used to perceive joint angles. This was also somewhat surprising at the time, because it was thought that perception of one’s joint angles arose exclusively out of the receptors in the joints themselves. The same phenomenon, of skin movement being incidentally involved in some other primary action, is at work here. We might be able to say that any time the skin is moved perceptibly, cutaneous signals are bound up with the percept itself.

In fact, I think this may be a good object lesson in how words about feelings can be very confusing. A few years ago, before the recent considerable progress in mapping the neural signature of interoception, the word ‘interoception’ was used to describe a class of perceptions—ones whose object was the perceiver. Interoception meant the perception of bodily processes: heartbeat, metabolic functioning, and so on. When scientists discovered a neural pathway that serves only this purpose, the word suddenly began to refer not to the perceptual modality, but exclusively to that neural pathway. Now that multiple pathways have been identified, the word will go back to its original meaning: a class of percepts, rather than a particular neural conduit.

“And reaching up my hand to try, I screamed to feel it touch the sky.”

On 22, Dec 2009 | No Comments | In art, language, tactility | By Dave

Check out this beautiful kinetic typography piece by Heebok Lee:

It’s based on an excerpt of the poem “Renascence” by Edna St. Vincent Millay.

- renascence

- noun

- 1. the revival of something that has been dormant.

- 2. another term for ‘renaissance.’

- (Oxford English Dictionary)

Millay, who wrote the poem when she was only 20 years old, originally called it “Renaissance.” It’s interesting that the two words are so close in meaning and are pronounced almost the same way, but they’re not considered alternate spellings of the same word.

Click below to read the poem in its entirety. I highly recommend reading the whole thing.

Read more…

The meaning of ‘most’

On 03, Dec 2009 | No Comments | In language | By Dave

William Shakespeare, who knew a thing or two about words, advised that “An honest tale speeds best, being plainly told.” But the exact meaning of plain language isn’t always easy to find. Even simple words like “most” and “least” can vary greatly in definition and interpretation, and are difficult to put into precise numbers.

Until now.

Thrilling!

In a groundbreaking new linguistic study, Prof. Mira Ariel of Tel Aviv University’s Department of Linguistics has quantified the meaning of the common word “most.” [The study] “is quite shocking for the linguistics world,” she says.

“I’m looking at the nature of language and communication and the boundaries that exist in our conventional linguistic codes,” says Prof. Ariel. “If I say to someone, ‘I’ve told you 100 times not to do that,’ what does ‘100 times’ really mean? I intend to convey ‘a lot,’ not literally ‘100 times.’ Such interpretations are contextually determined and can change over time.”

I’ve noticed that I exaggerate modally—I choose a number and run with it for a while. Currently it’s 5, as in, “I’ve told you 50 times; I had to wait for five hours.” I don’t mean some specific number, I just mean to use it as a placeholder for exaggeration purposes. There must be a term for this. Linguists?

When people use the word “most,” the study found, they don’t usually mean the whole range of 51-99%. The common interpretation is much narrower, understood as a measurement of 80 to 95% of a sample — whether that sample is of people in a room, cookies in a jar, or witnesses to an accident.

So many problems are caused when we try to communicate with words about whose meaning we think we agree when actually we don’t agree at all. But Professor Mira Ariel is helping sort it out by empirically determining what it is that we mean. Wittgenstein showed that the meaning of words cannot extend beyond how they’re used. So empirical studies like this one can help us immensely. I’m betting this kind of research will also help artificial intelligence research.

“‘Most’ as a word came to mean ‘majority’ only recently. Before democracy, we had feudal lords, kings and tribes, and the notion of ‘most’ referred to who had the lion’s share of a given resource — 40%, 30% or even 20%,” she explains. “Today, ‘most’ clearly has come to signify a majority — any number over 50 out of a hundred. But it wasn’t always that way. A two-party democracy could have introduced the new idea that ‘most’ is something more than 50%.”

I can’t tell from this short article whether Professor Ariel has done research to support her assertion that modern democracy really is the source for the lexical definition of “most” as meaning between 51% and 100%. But if true it’s pretty interesting because it shows that the word “most” may be political—that is, an expression of power or authority—rather than geometrical or mathematical, which is what I had always assumed.

Here’s the full article.

Questions

On 02, Dec 2009 | No Comments | In language | By Dave

Over dinner last night my friends and I got into a heated discussion about how to respond when someone asks a question that entails a rhetorical agenda. When a person on the street says, “Do you have any change?”, it poses a problem because you don’t want to lie as a matter of principle (because you have some) and you don’t want to tell the truth out of consideration for politeness and personal safety (because you have no intention of handing it over). Needless to say, we didn’t figure anything out.

The conversation reminded me of this scene from one of my favorite movies, Rosencrantz & Guildenstern Are Dead.

Gestures and words are neurologically similar

On 13, Nov 2009 | No Comments | In gesture, language, neuroscience | By Dave

Current thinking in the study of language is that, like a smart search engine that pops up the most suitable Web site at the top of its search results, the posterior temporal region serves as a storehouse of words from which the inferior frontal gyrus selects the most appropriate match. The researchers suggest that, rather than being limited to deciphering words alone, these regions may be able to apply meaning to any incoming symbols, be they words, gestures, images, sounds, or objects.

It doesn’t surprise me that a widely held theory of language is based on our understanding of how search engines work, because we tend to conceptualize our world with metaphors based on technology. But this suggests that many of our abstract theories might be pinned to planned obsolescence schedules, which is kind of amusing.

The post where I coin the word “intersthesia”

On 01, Oct 2009 | No Comments | In language | By David Birnbaum

I’ve been thinking a lot lately about Merleau-Ponty’s claim that we experience transcendent things synesthetically. As I noted in this post, he says that we experience overlapping, multimodal layers of sensations that overflow beyond our sensory limits, and that the things comprising the layers transcend us (are independent of us, do not require us in order to exist—at least, that’s our experience).

But to me, synesthesia is the wrong word to describe overlapping, multimodal sensations. Overlapping means that there are separable sensations, with different envelopes, that may co-occur. But the way I experience synesthesia is quite different. When I hear a loud, unexpected noise, it brings the sensation of bright color. Smells have texture. Flashing lights have an auditory rhythm. These combinations aren’t the result of exploration over time, and they don’t overflow. They aren’t like a coffee cup which can be seen, smelled, and touched at the same time. Synesthesia is self-contained. Synesthesia is integrated.

It’s pretty clear to me that Merleau-Ponty’s idea of synesthesia and the widely documented neurological condition by the same name are different phenomena. Therefore I think it’s important to use a different word to refer to each. So for the phenomenon that Merleau-Ponty is identifying, I say “intersthesia” is more apt. It better implies overlapping layers of sensation that come and go continuously, in multiple dimensions. With this new word, we can say that we are all intersthetic all the time, and some of us are synesthetic some of the time.

Con-fusion

On 27, Sep 2009 | No Comments | In art, language | By David Birnbaum

I recently posted a review of the book Merleau-Ponty’s Philosophy by Lawrence Hass. On two occasions Hass broke up a familiar word with a hyphen in order to make the word’s etymology more obvious. The first was “organ-ize,” which I posted about here. He pulled the same trick when writing “con-fusion”:

[The solution to a problem] doesn’t merely restate what is already given, but rather demands “crystallizing insight” through which some meaning-possibility suddenly “reorganizes” and “synchronizes” what was before a con-fusion of meaning, a problem to be solved. (l. 2285)

So what does it mean to be “confused”?

adj. 1a. being perplexed or disconcerted. 1b. disoriented with regard to one’s sense of time, place, or identity. 2. indistinguishable. 3. being disordered or mixed up (Merriam-Webster)

The history of the word confuse is, in a word, confused…. [The verb] confuse was derived c.1550, with the literal sense “mix or mingle things so as to render the elements indistinguishable.” In the active, figurative sense of “discomfit in mind or feeling,” confuse is only recorded from 1805. This activity could have been expressed before that by native constructions like dumbfound and flabbergast, or by confound. (Online Etymology Dictionary)

In the Wikipedia entry for Mental Confusion, someone has posted this image, in which there are indeed two distinct elements that are intermingled so as to render each one harder to distinguish:

Organ-ize

On 03, Sep 2009 | One Comment | In cognition, language, music | By David Birnbaum

I was inspired to research the words “organ” and “organized” after I read a statement made by Merleau-Ponty scholar Lawrence Hass that “perceptions are organized (

Here’s a typical definition of organize:

- v. arrange in an orderly way

- v. to make into a whole with unified and coherent relationships (yourdictionary.com)

These definitions aren’t satisfying. What makes an organization orderly, unified, and coherent? The definition Hass implies is much more illuminating: to be organized is to be divided according to the sense organs of a perceiver. Now we’re getting somewhere!

But moving in a slightly different direction, what the hell are we doing playing a musical instrument called an “organ”? And what does all this mean for Edgard Varèse’s famous definition of music as “organized sound”?

organ

- n. from the Greek organon meaning “implement”, “musical instrument”, “organ of the body”, literally, “that with which one works” (Online Etymology Dictionary)

- n. an instrument or means, as of action or performance

(Dictionary.com)

Substituting “organ” in Varèse’s famous definition with these, the word “music” means:

- music is sound with which one works

- music is sound that is a means of action or performance

For the first time I understand what Varèse meant when he said music is “organized sound.” We use the word music to mean sound that is utilized by someone to work or perform. Nothing more, nothing less.

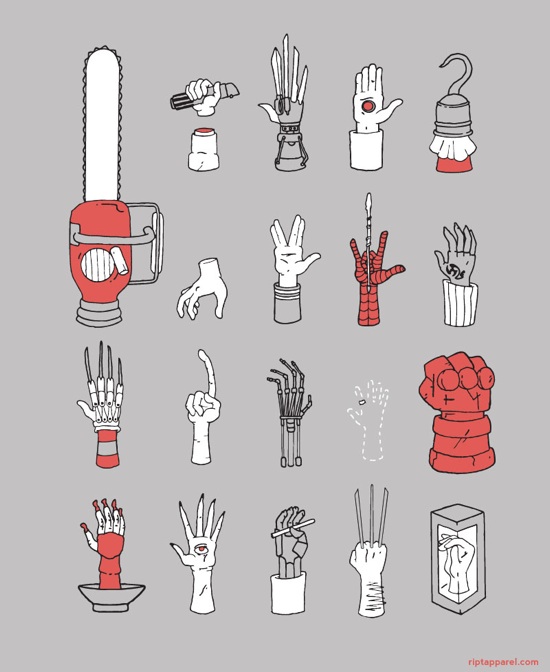

Legerdemain

On 10, Aug 2009 | No Comments | In language | By David Birnbaum

n. a display of skill or adroitness (Merriam-Webster)

n. sleight of hand; hence, any artful deception or trick (Webster’s Revised Unabridged Dictionary)

n. lightness of hand, from French: léger (light, nimble) + de (of) + main (hand) (yourdictionary.com)

Mimesis

On 23, Apr 2009 | No Comments | In language | By David Birnbaum

n. The imitation or representation of aspects of the sensible world, especially human actions, in literature and art. (American Heritage Dictionary, 4th ed.)

Biology. The close external resemblance of an organism, the mimic, to some different organism, the model, such that the mimic benefits from the mistaken identity, as seeming to be unpalatable or harmful. (Dictionary.com)

More from Encyclopedia Britannica Online:

Basic theoretical principle in the creation of art. The word is Greek and means “imitation” (though in the sense of “re-presentation” rather than of “copying”). Plato and Aristotle spoke of mimesis as the re-presentation of nature. According to Plato, all artistic creation is a form of imitation: that which really exists (in the “world of ideas”) is a type created by God; the concrete things man perceives in his existence are shadowy representations of this ideal type. Therefore, the painter, the tragedian, and the musician are imitators of an imitation, twice removed from the truth. Aristotle, speaking of tragedy, stressed the point that it was an “imitation of an action”—that of a man falling from a higher to a lower estate. Shakespeare, in Hamlet’s speech to the actors, referred to the purpose of playing as being “…to hold, as ’twere, the mirror up to nature.” Thus, an artist, by skillfully selecting and presenting his material, may purposefully seek to “imitate” the action of life.

The place I recently spotted the word was in the book The Hand by Frank Wilson, which I will be posting about shortly. Wilson discusses Origins of the Modern Mind by Merlin Donald, and quotes this passage:

Mimetic skill or mimesis rests on the ability to produce conscious, self-initiated, representational acts that are intentional, but not linguistic…. Mimesis is fundamentally different from imitation and mimicry in that it involves invention of intentional representations….Mimetic skill results in the sharing of knowledge without every member of the group having to reinvent that knowledge….The primary form of mimetic expression was, and continues to be, visuomotor. The mimetic skills basic to child-rearing, toolmaking, cooperative gathering and hunting, the sharing of food and other resources, finding, constructing, and sharing shelter, and expressing social hierarchies and custom would have involved visuomotor behavior. (Donald, pp. 169-177, quoted in Wilson, p. 48)

Latency

On 16, Apr 2009 | No Comments | In language | By David Birnbaum

n. 1. Computers. The time required to locate the first bit or character in a storage location, expressed as access time minus word time. 2. Physiology. The interval between stimulus and reaction. 3. The state of being not yet evident or active.

(via Dictionary.com)

Fremitus

On 14, Feb 2009 | No Comments | In language, medical | By David Birnbaum

n. a palpable vibration on the human body.

- Hepatic fremitus is a vibration felt over a person’s liver. It is thought to be caused by a severely inflamed and necrotic liver rubbing up against the peritoneum.

- Hydatid fremitus is a vibratory sensation felt on palpating a hydatid cyst.

- Pericardial fremitus is a vibration felt on the chest wall due to the friction of the surfaces of the pericardium over each other.

- Periodontal fremitus occurs in either of the alveolar bones when an individual sustains trauma from occlusion. It is a result of teeth exhibiting at least slight mobility rubbing against the adjacent walls of their sockets, the volume of which has been expanded ever so slightly by inflammatory responses, bone resorption or both.

- Pleural fremitus is a palpable vibration of the wall of the thorax caused by friction between the parietal and visceral pleura of the lungs.

- Rhonchal fremitus, also known as bronchial fremitus, is a palpable vibration produced during breathing caused by partial airway obstruction.

- Subjective fremitus is a vibration felt by a person who hums with the mouth closed.

- Tussive fremitus is a vibration felt on the chest when a person coughs.

- Vocal fremitus, also called pectoral fremitus or tactile vocal fremitus, is a vibration felt on a person’s chest during low-frequency vocalization.

(via Wikipedia)

Facial movement affects hearing

On 05, Feb 2009 | No Comments | In language, perception | By David Birnbaum

The movement of facial skin and muscles around the mouth plays an important role not only in the way the sounds of speech are made, but also in the way they are heard… “How your own face is moving makes a difference in how you ‘hear’ what you hear,” said first author Takayuki Ito, a senior scientist at Haskins.

Note that this sentence says that facial movement doesn’t affect what you hear, it only affects how you “hear” what you hear. More on this below.

When Ito and his colleagues used a robotic device to stretch the facial skin of “listeners” in a way that would normally accompany speech production, they found it affected the way the subjects heard the speech sounds. The subjects listened to words one at a time that were taken from a computer-produced continuum between the words “head” and “had.” When the robot stretched the listener’s facial skin upward, words sounded more like “head.” With downward stretch, words sounded more like “had.” A backward stretch had no perceptual effect.

And, timing of the skin stretch was critical—perceptual changes were only observed when the stretch was similar to what occurs during speech production.

These effects of facial skin stretch indicate the involvement of the somatosensory system in the neural processing of speech sounds. This finding contributes in an important way to our understanding of the relationship between speech perception and production. It shows that there is a broad, non-auditory basis for “hearing” and that speech perception has important neural links to the mechanisms of speech production.

“Listeners,” “hearing”… Why do I worry so much about these damn quotation marks? Because they point out an assumption we tend to make about perception: that there are objective sense data out there in the world, ready to be accessed through our senses. Within this model, secondary effects (caused by face-pulling robots) are seen as tricks played on our minds. But this is backwards. The astounding implication of this research is that our minds are composed of these tricks; the tricks are what produce a stable reality that meets our expectations.

For example, when the researchers were listening to recordings of the words “had” and “head” in order to design their experiment, the shape of their faces must have affected their hearing. (At least, that’s what their research seems to imply.) So who can listen without “listening”? Who determines whether the word is really “had” or “head”—someone without any facial expression at all?

The paper itself, which I haven’t read, can be purchased here.

Emotion, context, and email

On 11, Dec 2008 | No Comments | In language, sociology | By David Birnbaum

Researchers at the University of Chicago are studying how people express social context in emails through the use of emoticons, subject lines, and signatures. Their findings, published in the American Journal of Sociology, indicate that people must develop new communication strategies to write emails. “People can cultivate ways of communicating in online contexts that are equally as effective as those used offline,” write the researchers. “The degree to which individuals develop unique conventions in the medium will determine their ability to communicate effectively.”

(via Science Daily)

Prestidigitation

On 14, Nov 2008 | No Comments | In language | By David Birnbaum

n. Manual dexterity in the execution of tricks.

(via WordNet)

Deliquescent

On 28, Sep 2008 | No Comments | In language | By David Birnbaum

adj. 1. Tending to melt or dissolve into thin air. 2. Continuously branching ever smaller, as the limbs of a tree.

(via alphaDictionary)

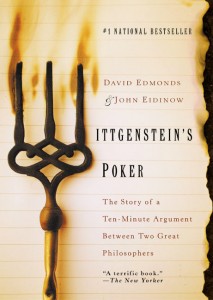

Philosopher deathmatch, and how words are like tools

On 07, Sep 2008 | No Comments | In books, language, tactility | By David Birnbaum

I just finished reading Wittgenstein’s Poker. From the jacket:

In October 1946, philosopher Karl Popper arrived at Cambridge to lecture at a seminar hosted by his legendary colleague Ludwig Wittgenstein. It did not go well: the men began arguing, and eventually, Wittgenstein began waving a fire poker toward Popper. It lasted scarcely 10 minutes, yet the debate has turned into perhaps modern philosophy’s most contentious encounter, largely because none of the eyewitnesses could agree on what happened. Did Wittgenstein physically threaten Popper with the poker? Did Popper lie about it afterward?

The authors provide a comprehensive biographical and historical context for the incident, and use it as a springboard into the two men’s respective philosophies. It’s an enjoyable look at two self-important, short-tempered intellectuals and their rivalry.

As I mentioned in this post, I find Wittgenstein’s philosophy of language often invokes touch themes. In the following excerpt from Poker (originating from one of his lectures), Wittgenstein makes a point about a colleague’s statement, “Good is what is right to admire,” utilizing a haptic metaphor:

The definition throws no light. There are three concepts, all of them vague. Imagine three solid pieces of stone. You pick them up, fit them together and you now get a ball. What you’ve now got tells you something about the three shapes. Now consider you have three balls of soft mud or putty. Now you put the three together and mold out of them a ball. Ewing makes a soft ball out of three pieces of mud. (68)

Another example stems from Wittgenstein’s midlife change in philosophical outlook. In his first publication, the Tractatus Logico-Philosophicus, he was preoccupied with the “picture theory of language”—the idea that sentences describe “states of affairs” that can be likened to the contents of a picture. Later, he developed a theory of language based on words as tools for conveying meaning. In my reading, he shifted from a vision-based to a haptic-based (in fact, a distinctly physical-interaction-based) understanding of how language works.

The metaphor of language as a picture is replaced by the metaphor of language as a tool. If we want to know the meaning of a term, we should not ask what it stands for: we should instead examine how it is actually used. If we do so, we will soon recognize that there is no underlying single structure. Some words, which at first glance look as if they perform similar functions, actually operate to distinct sets of rules. (229)

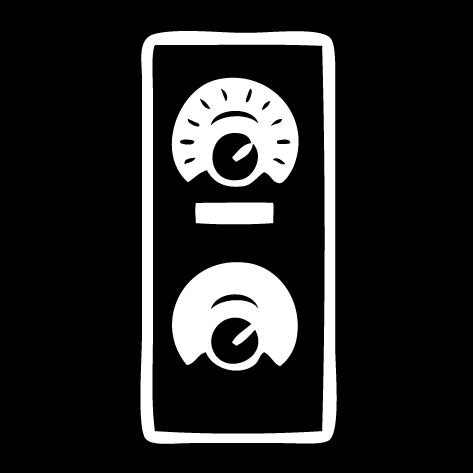

Here’s the relevant passage directly from Philosophical Investigations:

It is like looking into the cabin of a locomotive. We see handles all looking more or less alike. (Naturally, since they are all supposed to be handled.) But one is the handle of a crank which can be moved continuously (it regulates the opening of a valve); another is the handle of a switch, which has only two effective positions, it is either off or on; a third is the handle of a brake-lever, the harder one pulls on it, the harder it brakes; a fourth, the handle of the pump: it has an effect only so long as it is moved to and fro. (PI, I, par. 12)

Words as the physical interface to meaning. Love it!