philosophy

The post where I coin the word “intersthesia”

On 01, Oct 2009 | No Comments | In language | By David Birnbaum

I’ve been thinking a lot lately about Merleau-Ponty’s claim that we experience transcendent things synesthetically. As I noted in this post, he says that we experience overlapping, multimodal layers of sensations that overflow beyond our sensory limits, and that the things comprising the layers transcend us (are independent of us, do not require us in order to exist—at least, that’s our experience).

But to me, synesthesia is the wrong word to describe overlapping, multimodal sensations. Overlapping means that there are separable sensations, with different envelopes, that may co-occur. But the way I experience synesthesia is quite different. When I hear a loud, unexpected noise, it brings the sensation of bright color. Smells have texture. Flashing lights have an auditory rhythm. These combinations aren’t the result of exploration over time, and they don’t overflow. They aren’t like a coffee cup which can be seen, smelled, and touched at the same time. Synesthesia is self-contained. Synesthesia is integrated.

It’s pretty clear to me that Merleau-Ponty’s idea of synesthesia and the widely documented neurological condition of the same name are different phenomena, so I think it’s important to use a different word for each. For the phenomenon that Merleau-Ponty is identifying, I say “intersthesia” is more apt. It better implies overlapping layers of sensation that come and go continuously, in multiple dimensions. With this new word, we can say that we are all intersthetic all the time, and some of us are synesthetic some of the time.

Merleau-Ponty’s philosophy

On 24, Sep 2009 | 3 Comments | In books | By David Birnbaum

“Yes or no: do we have a body—that is, not a permanent object of thought, but a flesh that suffers when it is wounded, hands that touch?” — The Visible and the Invisible

Merleau-Ponty’s Philosophy by Lawrence Hass was the first full book I read on the great phenomenologist. If you’re fascinated by sensation, perception, synesthesia, metaphor, and flesh (and frankly, who isn’t?), please read it! It offers many wonderful revelations. I’ll briefly review the following topics from the book:

- Sensation/perception is a false dichotomy.

- Perception is “contact with otherness.”

- Synesthesia is a constant feature of experience.

- The concepts of “reversibility” and “flesh”

Tactility in the Tractatus Logico-Philosophicus

On 01, Jul 2009 | No Comments | In books | By David Birnbaum

I’ve written before about the later writings of Wittgenstein and the metaphor of the word as a manual tool. However, in Ludwig’s first published work, the Tractatus Logico-Philosophicus, his theory of language is that sentences represent states of affairs, the so-called picture theory of language. Although he later abandoned that viewpoint for the tool-based one, I was intrigued by this historically significant switch-up, so I read through the Tractatus with special attention to its visual and tactile metaphors. Here are a few examples.

2.013

Each thing is, as it were, in a space of possible states of affairs. This space I can imagine empty, but I cannot imagine the thing without the space.

2.0131

A spatial object must be situated in infinite space. (A spatial point is an argument-place.)

A speck in the visual field, though it need not be red, must have some colour: it is, so to speak, surrounded by colour-space. Notes must have some pitch, objects of the sense of touch some degree of hardness, and so on.

This is a prime example of a sensory metaphor used throughout the book. Objects are described in a visual way, as being seen as situated within a possibility space or belief space. They themselves have extension, but we perceive them as taking up some amount of the visual field (surrounded by context, which here is represented as other possibilities for their position or physical attributes).

2.151

Pictorial form is the possibility that things are related to one another in the same way as the elements of the picture.

2.1511

That is how a picture is attached to reality; it reaches right out to it.

2.1512

It is laid against reality like a measure.

2.15121

Only the end-points of the graduating lines actually touch the object that is to be measured.

Again we get a visual metaphor described in terms of physicality. How is a picture “attached” to reality? It reaches out to it. And while it touches reality, it only just touches it, in a tangential way. Wittgenstein starts with a visual image and then writes “attached”, “reaches out”, “laid against”, and “touch”—all haptic metaphors.

2.1514

The pictorial relationship consists of the correlations of the picture’s elements with things.

2.1515

These correlations are, as it were, the feelers of the picture’s elements, with which the picture touches reality.

Now we have moved from the picture as a “measure,” a passive geometry tool, to a picture as an agent. Not just any agent, but an agent with a capacity for haptic perception. What is a “feeler”? To me that word means a mobile extremity with sense organs which can be used to find out about the world. So, what Wittgenstein seems to be saying here is that when we generate a picture in our mind, it’s as if we are extending our hand into the world.

4.002

…

Language disguises thought. So much so, that from the outward form of the clothing it is impossible to infer the form of the thought beneath it, because the outward form of the clothing is not designed to reveal the form of the body, but for entirely different purposes.

In other words, thoughts are like physical objects. A word envelops a thought. We have a thought and then we toss a word-robe over it and shove it onto the stage of discourse where it can interface with other enrobed thoughts.

4.411

It immediately strikes one as probable that the introduction of elementary propositions provides the basis for understanding all other kinds of proposition. Indeed the understanding of general propositions palpably depends on the understanding of elementary propositions.

Once again, a tactile metaphor (“palpably”) is used for emphasis and to indicate comprehensive understanding.

5.557

…

What belongs to its application, logic cannot anticipate.

It is clear that logic must not clash with its application.

But logic has to be in contact with its application.

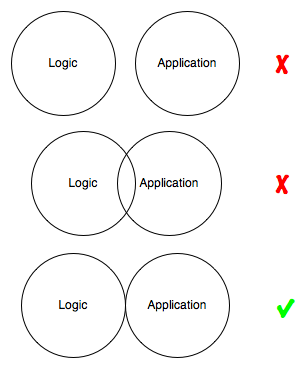

Therefore, logic and its application must not overlap.

I.e.,

Is “overlap” a haptic metaphor or a visual one? It could be either, or both.

6.432

How things are in the world is a matter of complete indifference for what is higher. God does not reveal himself in the world.

6.4321

The facts all contribute only to setting the problem, not to its solution.

6.44

It is not how things are in the world that is mystical, but that it exists.

6.45

To view the world sub specie aeterni is to view it as a whole—a limited whole.

Feeling the world as a limited whole—it is this that is mystical.

To feel is to know, silently, mystically.

I was pretty surprised at how easy it seems to foresee Wittgenstein’s turn from the eye to the hand. He presents what he calls a picture theory of language, but it repeatedly leads to a description of solid objects in space, or bodies moving and feeling and contacting each other. Of course I’m reading with a very biased perspective. Not only is my goal to hunt for tactile metaphors but I also know how the story ends some 30 years later. Still, I can’t help but feel that the tactile language in the Tractatus may foreshadow the shift to come.

Enactive perception

On 27, Feb 2008 | No Comments | In books | By David Birnbaum

I just finished Action In Perception by Alva Noë. It’s a very readable introduction to the enactive view of perceptual consciousness, which argues that perception neither happens in us nor to us; rather, it’s something we do with our bodies, situated in the physical world, over time. Our knowledge of the way in which sensory stimulation varies as we control our bodies is what brings experience about. Without sensorimotor skill, a stimulus cannot constitute a percept. Noë presents empirical evidence for his claim, drawing on the phenomenology of change blindness as well as tactile vision substitution systems. I highly recommend the book.

The emphasis on embodied experience leads to the use of touch as a model for perception, rather than the traditional vision-based approach. Here’s an excerpt:

Touch acquires spatial content—comes to represent spatial qualities—as a result of the ways touch is linked to movement and to our implicit understanding of the relevant tactile-motor dependencies governing our interaction with objects. [Philosopher George Berkeley] is right that touch is, in fact, a kind of movement. When a blind person explores a room by walking about in it and probing with his or her hands, he or she is perceiving by touch. Crucially, it is not only the use of the hands, but also the movement in and through the space in which the tactile activity consists. Very fine movements of the fingers and very gross wanderings across a landscape can each constitute exercises of the sense of touch. Touch, in all such cases, is movement. (At the very least, it is movement of something relative to the perceiver.) These Berkeleyan ideas form a theme, more recently, in the work of [Brian O'Shaughnessy's book "Consciousness and World"]. He writes: “touch is in a certain respect the most important and certainly the most primordial of the senses. The reason is, that it is scarcely to be distinguished from the having of a body that can act in physical space”…But why hold that touch is the only active sense modality? As we have stressed, the visual world is not given all at once, as in a picture. The presence of detail consists not in its representation now in consciousness, but in our implicit knowledge now that we can represent it in consciousness if we want, by moving the eyes or by turning the head. Our perceptual contact with the world consists, in large part, in our access to the world thanks to our possession of sensorimotor knowledge.

Here, no less than in the case of touch, spatial properties are available due to links to movement. In the domain of vision, as in that of touch, spatial properties present themselves to us as “permanent possibilities of movement.” As you move around the rectangular object, its visible profile deforms and transforms itself. These deformations and transformations are reversible. Moreover, the rules governing the transformation are familiar, at least to someone who has learned the relevant laws of visuomotor contingency. How the item looks varies systematically as a function of your movements. Your experience of it as cubical consists in your implicit understanding of the fact that the relevant regularity is being observed.

Virtual and augmented reality interface design practices have already begun to demonstrate these concepts. Head-mounted augmented reality displays sense the user’s eye and body movements to construct virtual percepts. Head-related transfer functions (HRTFs) synthesize sound as it would be heard by an organism with certain physical characteristics, in a particular environment. It seems to me that an important implication for enactive interface design is that haptic sensory patterns can lead to perceptual experience in all sensory modes (vision, hearing, touch). Thus, all human-computer interaction/user experience can be viewed in a haptic context.
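To make the idea of a sensorimotor dependency a little more concrete, here is a minimal sketch in Python of the bookkeeping a head-tracked display might do to keep a virtual sound fixed in the room while the listener turns. It is only an illustration under my own assumptions: the function name `relative_azimuth`, the use of degrees, and the convention that positive angles are to the listener's right are all hypothetical, and this is not an HRTF or any real library's API. It only computes the direction that would then be handed to the rendering stage.

```python
def relative_azimuth(source_bearing_deg: float, head_yaw_deg: float) -> float:
    """Direction of a world-fixed source relative to where the head points.

    Hypothetical convention: angles in degrees, positive to the listener's
    right, result wrapped into the range [-180, 180).
    """
    return (source_bearing_deg - head_yaw_deg + 180.0) % 360.0 - 180.0


# A virtual sound placed 90 degrees to the right of the starting orientation.
source = 90.0

# As the head turns toward the source, the source drifts toward straight ahead;
# turning back restores the original direction, so the change is reversible.
for yaw in (0.0, 45.0, 90.0, 45.0, 0.0):
    print(f"head yaw {yaw:5.1f} -> source at {relative_azimuth(source, yaw):6.1f}")
```

The point of the sketch is that the stimulus delivered to the ears is a lawful function of the listener's own movement, which is the kind of regularity the enactive view says perception depends on.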