scare quotes

Perceptual chauvinism

On 11, Jan 2010 | No Comments | In medical, music, perception | By Dave

I read two articles in a row today that use unnecessary quotation marks, exposing that strange discomfort with writing about touch that I have written about before. As humans we hold our feelings dear, so we don’t like to say that any other beings can feel. Especially plants, for chrissake:

Plants are incredibly temperature sensitive and can perceive changes of as little as one degree Celsius. Now, a report shows how they not only “feel” the temperature rise, but also coordinate an appropriate response—activating hundreds of genes and deactivating others; it turns out it’s all about the way that their DNA is packaged.

The author can’t simply say that plants can feel, so instead he writes “feel,” indicating a figurative sense of the word. Why? Because the word ‘feel’ implies some amount of consciousness. (In fact I have argued that ‘feeling’ signifies a baseline for the existence of a subject.) Only the animal kingdom gets feeling privileges.

And then, in another article posted on Science Daily, we have a similar example, but this one is even more baffling. The context is that research has shown that playing Mozart to premature infants can have measurable positive effects on development:

A new study… has found that pre-term infants exposed to thirty minutes of Mozart’s music in one session, once per day expend less energy—and therefore need fewer calories to grow rapidly—than when they are not “listening” to the music…

In the study, Dr. Mandel and Dr. Lubetzky and their team measured the physiological effects of music by Mozart played to pre-term newborns for 30 minutes. After the music was played, the researchers measured infants’ energy expenditure again, and compared it to the amount of energy expended when the baby was at rest. After “hearing” the music, the infant expended less energy, a process that can lead to faster weight gain.

Not allowing plants to feel is one thing. And I can even understand the discomfort with writing that newborns are listening to music, because that may imply they are attending to it, which is questionable. But why can’t human babies be said to hear music? This is the strangest case of perceptual chauvinism I have yet come across.

The Gray Ditz discovers augmented reality

On 04, Dec 2009 | No Comments | In interfaces | By Dave

I must speak up on this one. Recently in the New York Times Sunday magazine, Rob Walker wrote a foolish article about augmented reality. The first half deals with introducing augmented reality, the Avatar movie, and the Yelp app. But this is his description of the future of this incredible technology:

Core77, the online design magazine, suggested one amusing possibility earlier this year: fold in facial-recognition technology and you could point your phone at Bob from accounting, whose visage is now “augmented” with the information that he has a gay son and drinks Hoegaarden. More recently, a Swedish company has publicized a prototype app that would in fact augment the image of Bob (or whomever) with information from his social-networking profiles — and they aren’t kidding.

Your silly example wrecks the already floundering article, whose original purpose, I assume, was to inform us about an incoming technology. So how and why did you come up with the idea that Bob would be marked with a note saying his son is gay? It implies that augmented reality entails a violation of privacy, which it does not.

How about: “Fold in facial-recognition technology and you could point your phone at Bob from accounting and see him enwrapped in a digital ecosystem—video tattoos bloom across his body like Ray Bradbury’s Illustrated Man, while around his head swirls a halo of tweets, emotions, and memories. It may all be virtual, but the way you see him is augmented in a very real way.”

But instead of offering a creative example to show that the possibilities are endless, you make up an offensive scenario and then sarcastically write “they aren’t kidding,” which subtly attributes your idea to the people who are developing augmented reality. It’s dishonest.

If this sounds off-putting, it’s worth noting that most assessments of the augmented-reality trend include the speculation that the hype will fade.

So you’re trying to put us off augmented reality, and then reassure us that we have nothing to worry about since it won’t happen anyway. Then why write about this topic in the first place? If it’s not news, and it’s not interesting, what’s the point? And “most assessments” is weaselly. If you’ve got the goods, link to them, or at least name your sources.

…Why just look at a restaurant, a colleague or the “Mona Lisa,” when you can “augment” them all?

The scare quotes around the word ‘augment’ make it sound like you’re uncomfortable using the word, as if it were jargon. Expand your horizons! You don’t need to use quotes every time you learn a new word!

I don’t mean to pick on you, NYT, but your articles about new technologies are sometimes rather irritating. Instead of writing with genuine interest and optimism about exciting new trends, you project a cynicism that hints at fear and confusion just beneath the surface.

(via DUB For the Future)

Facial movement affects hearing

On 05, Feb 2009 | No Comments | In language, perception | By David Birnbaum

The movement of facial skin and muscles around the mouth plays an important role not only in the way the sounds of speech are made, but also in the way they are heard… “How your own face is moving makes a difference in how you ‘hear’ what you hear,” said first author Takayuki Ito, a senior scientist at Haskins.

Note that this sentence says that facial movement doesn’t affect what you hear; it only affects how you “hear” what you hear. More on this below.

When Ito and his colleagues used a robotic device to stretch the facial skin of “listeners” in a way that would normally accompany speech production, they found it affected the way the subjects heard the speech sounds. The subjects listened to words one at a time that were taken from a computer-produced continuum between the words “head” and “had.” When the robot stretched the listener’s facial skin upward, words sounded more like “head.” With downward stretch, words sounded more like “had.” A backward stretch had no perceptual effect.

And, timing of the skin stretch was critical—perceptual changes were only observed when the stretch was similar to what occurs during speech production.

These effects of facial skin stretch indicate the involvement of the somatosensory system in the neural processing of speech sounds. This finding contributes in an important way to our understanding of the relationship between speech perception and production. It shows that there is a broad, non-auditory basis for “hearing” and that speech perception has important neural links to the mechanisms of speech production.

“Listeners,” “hearing”… Why do I worry so much about these damn quotation marks? Because they point out an assumption we tend to make about perception: that there are objective sense data out there in the world, ready to be accessed through our senses. Within this model, secondary effects (caused by face-pulling robots) are seen as tricks played on our minds. But this is backwards. The astounding implication of this research is that our minds are composed of these tricks; the tricks are what produce a stable reality that meets our expectations.

For example, when the researchers were listening to recordings of the words “had” and “head” in order to design their experiment, the shape of their faces must have affected their hearing. (At least, that’s what their research seems to imply.) So who can listen without “listening”? Who determines whether the word is really “had” or “head”—someone without any facial expression at all?

The paper itself, which I haven’t read, can be purchased here.

My hometown's newspaper puts those annoying quotes around the word "feel"

On 12, Nov 2008 | One Comment | In tactility | By David Birnbaum

The San Diego Union-Tribune recently published an article about haptics:

On one computer, users could “feel” the contours of a virtual rabbit.

Do users “feel” the contours of a virtual rabbit, or do they just feel them? Do we “read” text on the internet, or just read it? When we watch a movie do we “see” the actors? Harrumph.

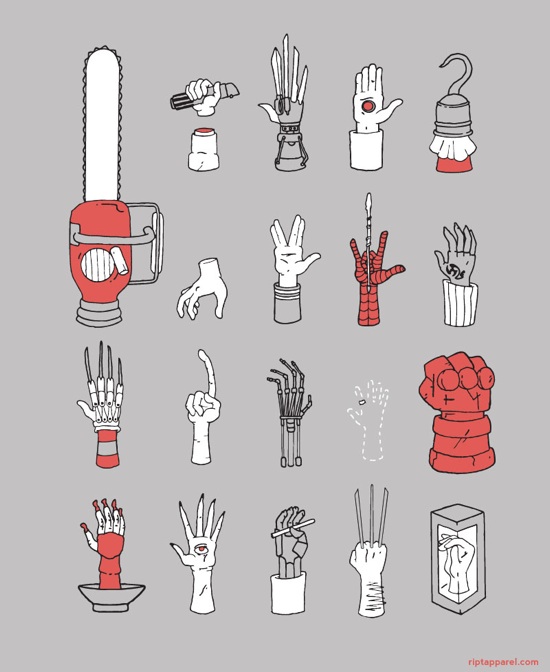

The article is about Butterfly Haptics, which is a haptic interface based on magnetic levitation. I “felt” it at SIGGRAPH ’08, and it was extraordinarily crisp and strong. The only problem is that the workspace (range of motion) is tiny compared to other haptic interfaces, and there doesn’t seem to be a clear development path for expanding the workspace using magnetic technology. Nevertheless, it’s great to be able to add magnetic field actuation to the relatively limited number of technologies that can be used for haptic display.