Towards a new conceptual framework for digital musical instruments

Input Devices and Music Interaction Laboratory

McGill University

Montreal, Canada

Abstract

This paper describes the adaptation of an existing model of human information processing for the categorization of digital musical instruments in terms of performance context and behavior. It further presents a visualization intended to aid the analysis of existing DMIs and the design of new devices. Three new interfaces constructed by the authors are examined within this framework to illustrate its utility.

1. Introduction

When considering and categorizing devices that produce sound, it is common to become entangled in the differentiation of instruments, musical toys, and installations. Even within categories, confusion arises: conceptual models of musical instruments vary according to historical, cultural, and personal biases. New musical devices have varying degrees of success in penetrating the conceptual boundary between instrument and non-instrument, and frequently their path into the instrument domain is unexpected from the perspective of design intentionality. The issue is further confused by a layer of artistic interpretation, exploding the possible definitions of ‘instrument’ to virtually any conceivable artifact that can involve sound (including the absence of sound). ‘Instrument’ can thus refer to a traditional acoustic device, a controller with no specific mapping, a software program that maps control input to musical output, or can be synonymous with a musical piece itself, in which the interface (including its physical component) is integrated with musical sound output in the composer’s expressive intent [1]. However, a systematic investigation of the design space of a musical device (such as dimension space analysis [2]) promotes an understanding of musical devices that considers both design goals and constraints arising from human capability and environmental conditions.

For the purposes of this investigation, the definition of ‘musical instrument’ will be restricted to refer to a sound-producing device that can be controlled by a variety of physical gestures and is reactive to user actions [3]. A digital musical instrument (DMI) implies a musical instrument with a sound generator that is separable (but not necessarily separate) from its control interface, and with musical and control parameters related by a mapping strategy [4]. While computers are an essential part of a system such as this, the representation of the computer as a symbolic, metaphorical machine generating function-relationships to which we interface sensor and feedback systems does not adequately articulate its role in problem-posing task domains such as music composition and performance.

It has been said that, with respect to computer music, the differentiation between computer and musical instrument is a misconception, and this is a problem that has its solution in interface design [5]. Indeed, a computer may be used to contain structural components of an instrument, or many instruments, whose limits are only defined in terms of the computer’s ability to implement known sound synthesis, signal processing, and interfacing methods. But if the ‘computer = instrument’ paradigm is used, it is likely to leave the impression that digital instruments are also general purpose tools, and that the freedom to change mapping and feedback parameters arbitrarily provides the player with a better musical tool. Instead, the computer can be more aptly viewed as a semiotic, connotative machine that hypothesizes design criteria rather than exclusively representing a priori interaction metaphors based on the cultural and personal experience of the user [6]. Understanding the computer in this way endows it a constitutive role in performance behaviors that are not guided by explicit intention and evaluation of feedback, and directs scrutiny toward a variety other factors.

Fields of research that have been applied to instrument analysis and development range from human-computer interaction [7], theories of design [8], music cognition and perception [9], organology [10], and artistic/musicological approaches [11], to name only a few. We propose another possible approach, tying together ideas from human-machine interaction and music performance practice by emphasizing the context of a musical performance.

2. A Human-Machine Interaction approach

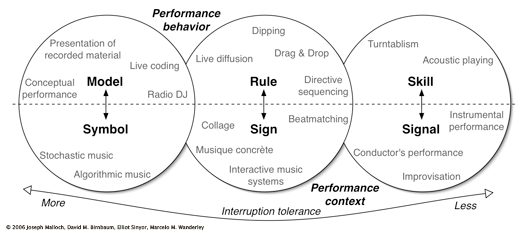

We have developed a paradigm of interaction and musical context based on Jens Rasmussen’s model of human information processing [12], previously used to aid DMI design in [13]. Rasmussen examines the functions of “man-made systems” and human interaction in terms of the user’s perception and the reasons (rather than causes) behind system design and human behavior. He describes interaction behaviors as being skill-, rule-, or knowledge-based. Rasmussen himself suggests that knowledge-based might be more appropriately called model-based, and we believe this term more clearly denotes this mode of behavior, particularly during performance of music, as “musical knowledge” can have various conflicting definitions.

Briefly, skill-based behavior is defined as a real-time, continuous response to a continuous signal, whereas rule-based behavior consists of the selection and execution of stored procedures in response to cues extracted from the system. Model-based behavior refers to a level yet more abstract, in which performance is directed towards a conceptual goal, and active reasoning must be used before an appropriate action (rule- or skill-based) is taken. Each of these modes is linked to a category of human information processing, distinguished by their human interpretation; that is to say, during various modes of behavior, environmental conditions are perceived as playing distinct roles, which can be categorized as signals, signs, and symbols. Figure 1 demonstrates our adaptation of Rasmussen’s framework, in which both performance behaviors and context are characterized as belonging to model/symbol, rule/sign, or skill/signal domains.

|

2.1. Skill-, Rule-, and Model-based musical performance

Skill-based behavior is identified by [8] as the mode most descriptive of musical interaction, in that it is typified by rapid, coordinated movements in response to continuous signals. Rasmussen’s own definition and usage is somewhat broader, noting that in many situations a person depends on the experience of previous attempts rather than real-time signal input, and that human behavior is very seldom restricted to the skill-based category. Usually an activity mixes rule- and skill-based behavior, and performance thus becomes a sequence of automated (skill-based) sensorimotor patterns. Instruments that belong to this mode of interaction have been compared more closely in several ways. The “entry-fee” of the device [5], allowance of continuous excitation of sound after an onset [9], and the number of musical parameters available for expressive nuance [14] may all be considered.

It is important to note that comparing these qualities does not determine the ‘expressivity’ of an instrument. ‘Expressivity’ is commonly used to discuss the virtue of an interaction design in absolute terms, yet expressive interfaces rely on the goals of the user and the context of output perception to generate information. Expression, a concept that is unquantifiable and dynamically subjective, cannot be viewed as an aspectual property of an interaction. Clarke, for example, is careful not to state that musical expressivity depends on the possession of a maximum or minimum number of expressive parameters; instead, he states that the range of choices available to a performer will affect performance practice [14]. A musician can perform expressively regardless of the choices presented, but must transfer her expressive nuance into different structural parameters and performance behavior modes. This relates to the HCI principle that an interface is not improved by simply adding more degrees of freedom (DOF); rather, at issue is the tight matching of the device’s control structure with the perceptual structure of the task [15].

During rule-based performance the musician’s attention is focused on controlling a process rather than a signal, responding to extracted cues and internal or external instructions. Behaviors that are considered to be quintessentially rule-based are typified by the control of higher-level processes and by situations in which the performer acts by selecting and ordering previously determined procedures, such as live sequencing, or using ‘dipping’ or ‘drag and drop’ metaphors [5]. Rasmussen describes rule-based behavior as goal-oriented, but observes that the performer may not be explicitly aware of the goal. Similar to the skill-based domain, interactions and interfaces in the rule-based area can be further distinguished by the rate at which a performer can effect change and by the number of task parameters available as control variables.

The model domain occupies the left side of the visualization, where the amount of control available to the performer (and its rate) is determined to below. It differs from the rule-based domain in its reliance on an internal representation of the task, thus making it not only goal-oriented but goal-controlled. Rather than performing with selections among previously stored routines, a musician exhibiting model-based behavior possesses only goals and a conceptual model of how to proceed. He must rationally formulate a useful plan to reach that goal, using active problem-solving to determine an effectual course of action. This approach is thus often used in unfamiliar situations, when a repertoire of rule-based responses does not already exist.

2.2. Signals, Signs and Symbols

By considering their relationship with the types of information described by Rasmussen, performance context can also be distributed among the interaction domains. The signal domain relates to most traditional instrumental performance, whether improvised or pre-composed, since its output is used at the signal-level for performance feedback. The sign domain relates to sequenced music, in which pre-recorded or predetermined sections are selected and ordered. Lastly, the symbol domain relates to conceptual music, which is not characterized by its literal presentation but rather the musical context in which it is experienced. In this case, problem-solving and planning are required—such as the in the case of conceptual scores, which may lack specific ‘micro-level’ musical instructions but instead consist of a series of broader directives or concepts that must be actively interpreted by the performer [16].

3. Using the visualization

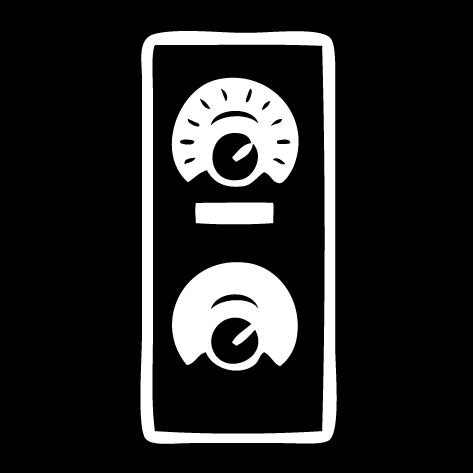

Consider the drum machine, for instance the Roland TR-808. To create a rhythm, the user selects a drum type using a knob, and then places the drum in a 16-note sequence by pressing the corresponding button(s). When the ‘start’ button is pressed, the sequence is played automatically at the selected tempo. Using the diagram, this is clearly a rule-based way to perform a rhythm. A skill-based example in a similar vein would be using a drum machine controlled by trigger pads that require the performer to strike the pads in real-time. Of course, a drum kit would be another obvious skill-based example. Using the same musical idiom but on the opposite end of the diagram we can consider using the live coding tool Chuck [17] to create the same rhythm. Here the performer would take a model-based approach: playing a beat would require breaking the task into sub-tasks, namely creating a loop and deciding on an appropriate rest interval based on the desired tempo.

4. Applications and implications

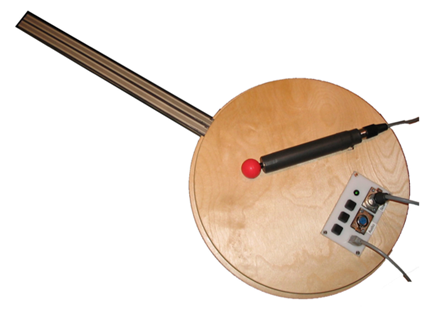

4.1 The Rulers

The Rulers, an interface developed by one of the authors at the Center for Computer Research in Music and Acoustics 2004 Summer Workshop, was designed to evoke the gesture of plucking or striking a ruler (or ‘tine’) that is fixed at one end. Utilizing infrared reflect sensors, the tines play one of seven percussive samples, slices extracted from the Amen breakbeat [18]. Each sample consists of either a single drum or cymbal, or a sequence of drums comprising a short rhythm. Because the samples contain sub-rhythms, the instrument must be played in the context of a global tempo set in the Max/MSP patch that remains fixed during the course of the performance. When plucked, each tine oscillates for a different amount of time; the sample it plays back has been assigned strategically, so that the length of sound output and physical oscillation are correlated. This provides an element of visual and passive haptic feedback to the player, as perceptual characteristics of the sound are tightly coupled to the physical construction of the interface. Output amplitude is determined by the amplitude of the tine’s oscillation, leading to control over the amplitude of initial excitation and damping—characteristics that classify it as an instrument that outputs musical events with a non-excited middle [9].

|

Playing the Rulers is principally a skill-based behavior, requiring constant performer input to sustain musical output. While it does not allow for continuous excitation, it does allow continuous modification after an onset, as the tines may be damped to affect the decay rate of musical events. Yet because the musical output contains fixed elements of rhythm over which the performer has no real-time control, the interaction is also directing short-time musical processes that do not originate from the player but are hard-wired into the instrument/system; it therefore incorporates elements of both the signal and sign domains.

4.2 The Celloboard

Another new interface, the Celloboard, was designed to tie sound output with continuous energy input from the performer. Using contact microphones and accelerometers to sense the amplitude, direction and pressure of bowing gestures, this controller allows the continuous excitation, as well as modification, of its sound. Pitch and timbral sound elements, created using scanned synthesis [19], are controlled by sensors on the controller’s neck, sensing position and pressure of touch on two channels, and also strain of the neck itself on one axis.

|

With its many continuously-controlled parameters and integral mapping, the Celloboard controller easily fits into the skill/signal domain. Any interruption in performance will immediately be audible since sound output requires constant bowing of the interface. It possesses a high ‘entry-fee’ for both sound excitation and modification, and does not easily allow high-level control of musical processes. Adaptations suggested by the framework might be to map the physical controls to a synthesis technique even less process-based than the present scanned synthesis implementation, or to allow the selection of discrete pitches (effectively lowering the modification entry-fee), in order to make the instrument more quickly mastered if a larger user-base is desired.

The Gyrotyre

The Gyrotyre [20] is a handheld bicycle wheel-based controller that uses a gyroscope sensor and a two-axis accelerometer to provide information about the rotation and orientation of the wheel. It was designed as a controller around a small group of mappings that would make use of the continuous motion data. We will look at two Gyrotyre mappings in order to place them in the framework.

|

In the first mapping, the interface controls playback of a sound file scrubbed backwards and forwards by spinning the wheel, evoking a turntable interface. The wheel may be spun very fast and then damped to achieve a descending glissando effect, or it may be kept spinning at a constant speed. This DMI (i.e., this particular mapping of the Gyrotyre controller) fits in the skill-based domain of the framework.

In an arpeggiator mapping, spinning the wheel while pressing one of the keys on the handle repeatedly cycles through a three-note arpeggio whose playback speed is directly correlated to the speed of the wheel. The performer changes the root note and the octave by tilting the Gyrotyre. In this case, performance behavior is predominantly rule-based. The musician reacts to signs, such as the current root note and the speed of the playback. The skill-based aspect of performance is the sustaining of a constant speed of rotation while holding a steady root-note position. Ostensibly, a performer could practice to develop these skills, but this would offer little advantage as the instrument outputs discrete, predetermined pitches. Considering musical context could lead to two changes: the mapping could be altered to reflect the required skill in the musical output, and/or the root-note selection method could be mapped to a gesture more appropriate for a rule-based behavior.

5. Conclusions

Our framework is intended to clarify some of the issues surrounding music interface design in several ways. Firstly, it may be used to analyze, compare, and contrast interfaces and instruments that have already been built, in order to facilitate an understanding of their relationships to each other. Additionally, the design of new

instruments can benefit from this description, whether the designer intends to start with a particular interface concept or wishes to work within a specific musical context. Finally, it may be useful for adapting existing DMIs to different musics, or to increase their potential for performance within a specific musical context.

6. Acknowledgments

The authors would like to thank Ian Knopke, Doug Van Nort, and Bill Verplank. The first author received research funds from the Center for Interdisciplinary Research in Music Media and Technology, and the last author received funding from an NSERC discovery grant.

7. References

[1] Schnell, N., Battier, M. Introducing composed instruments, technical and musicological implications. In Proceedings of the International Conference on New Instruments for Musical Expression, pp. 138–142. Dublin, Ireland, 2002.

[2] Birnbaum, D. M., Fiebrink, R., Malloch, J., Wanderley, M. M. Towards a dimension space for musical devices. In Proceedings of the International Conference on New Interfaces for Musical Expression, pp. 192–195. Vancouver, Canada, 2005.

[3] Bongers, B. Physical interaction in the electronic arts: Interaction theory and interfacing techniques for real-time performance. In Wanderley, M. M. and Battier, M., editors, Trends in Gestural Control of Music. Ircam, Centre Pompidou, France, 2000.

[4] Wanderley, M. M., Depalle, P. Gestural control of sound synthesis. In Proceedings of the IEEE Special Issue on Engineering and Music — Supervisory Control and Auditory Communication, 92(4):632–644, 2004.

[5] Wessel, D., Wright, M. Problems and prospects for intimate musical control of computers. Computer Music Journal, 26(3):11–22, 2002.

[6] Hamman, M. From symbol to semiotic: Representation, signification, and the composition of music interaction. Journal of New Music Research, 28(2):90–104, 1999.

[7] Wanderley, M. M., Orio, N. Evaluation of input devices for musical expression: Borrowing tools from HCI. Computer Music Journal, 26(3):62–76, 2002.

[8] Cariou, B. Design of an alternative controller from an industrial design perspective. In Proceedings of the International Computer Music Conference, pp. 366–367. San Francisco, California, USA, 1992.

[9] Levitin, D., McAdams, S., Adams, R. L. Control parameters for musical instruments: a foundation for new mappings of gesture to sound. Organized. Sound, 7(2):171–189, 2002.

[10] Kvifte, T., Jensenius, A. R. Towards a coherent terminology and model of instrument description and design. In Proceedings of the International Conference on New Interfaces for Musical Expression, pp. 220–225. Paris, France, 2006.

[11] Jordà, S. Digital lutherie: Crafting musical computers for new musics’ performance and improvisation. Ph.D. dissertation, Universitat Pompeu Fabra, 2005.

[12] Rasmussen, J. Information Processing and Human-Machine Interaction: An Approach to Cognitive Engineering. New York, NY, USA: Elsevier Science Inc., 1986.

[13] Cariou, B. “The aXiO midi controller. In Proceedings of the International Computer Music Conference, pp. 163–166. Århus, Denmark, 1994.

[14] Clarke, E. F. Generative Processes in Music. Oxford: J. A. Sloboda (Ed), Clarendon Press, 1988, ch. Generative principles in music performance, pp. 1–26.

[15] Jacob, R. J. K., Sibert, L. E., McFarlane, D. C., Mullen, Jr., M. P. Integrality and separability of input devices. ACM Transactions on Human Computer Interaction, 1(1):3–26, 1994.

[16] Cage, J. Silence: Lectures and Writings. Middletown: Wesleyan University Press, 1961.

[17] Wang, G., Cook, P. On-the-fly programming: Using code as an expressive musical instrument. In Proceedings of the International Conference on New Instruments for Musical Expression, Hamamatsu, Japan, 2004, pp. 138–143.

[18] Fink, R. The story of ORCH5, or, the classical ghost in the hip-hop machine. Popular Music, 24(3):339–356, 2005.

[19] Mathews, M., Verplank, B. Scanned synthesis. In Proceedings of the International Computer Music Conference, pp. 368–371. Berlin, Germany, 2000.

[20] Sinyor, E., Wanderley, M. M. Gyrotyre: A dynamic hand-held computer-music controller based on a spinning wheel. In Proceedings of the International Conference on New Instruments for Musical Expression, pp. 42–45. Vancouver, Canada, 2005.

DAFx-06, Montreal, Canada

© 2006. Copyright remains with the author(s).