Towards a dimension space for musical devices

David M. Birnbaum, Rebecca Fiebrink, Joseph Malloch, Marcelo M. Wanderley

Input Devices and Music Interaction Laboratory

McGill University

Montreal, Canada

Abstract

While several researchers have grappled with the problem of comparing musical devices across performance, installation, and related contexts, no methodology yet exists for producing holistic, informative visualizations for these devices. Drawing on existing research in performance interaction, human-computer interaction, and design space analysis, the authors propose a dimension space representation that can be adapted for visually displaying musical devices. This paper illustrates one possible application of the dimension space to existing performance and interaction systems, revealing its usefulness both in exposing patterns across existing musical devices and aiding in the design of new ones.

Keywords

Human-Computer Interaction, Design Space Analysis, New Interfaces for Musical Expression

1. Examining musical devices

Musical devices can take varied forms, including interactive installations, digital musical instruments, and augmented instruments. Trying to make sense of this wide variability, several researchers have proposed frameworks for classifying the various systems.

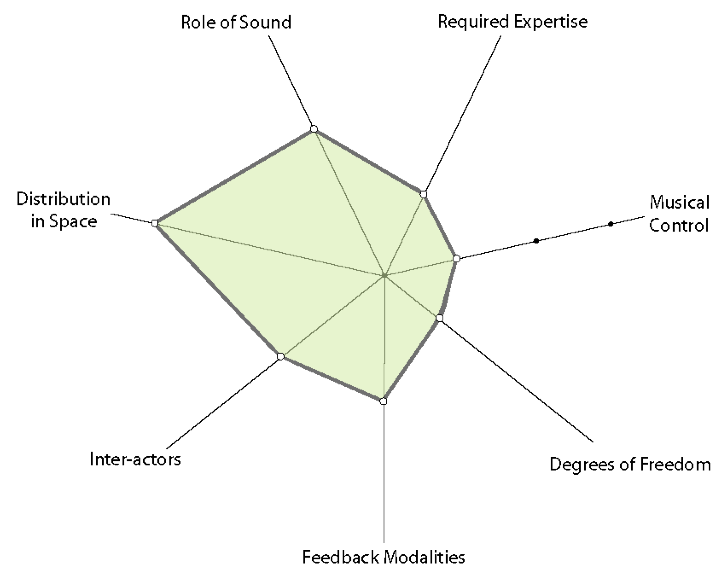

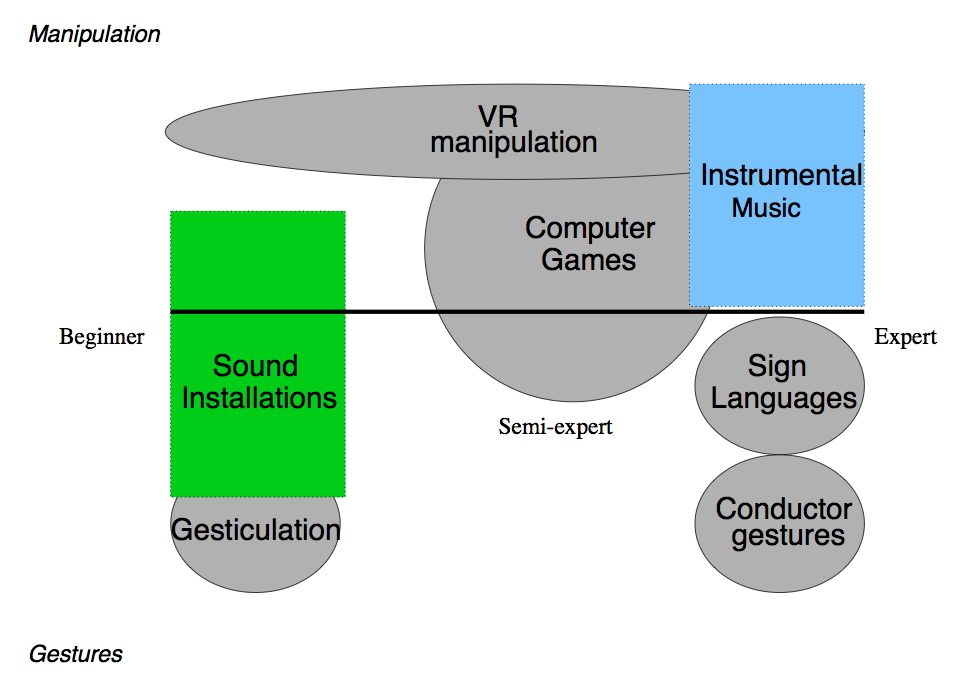

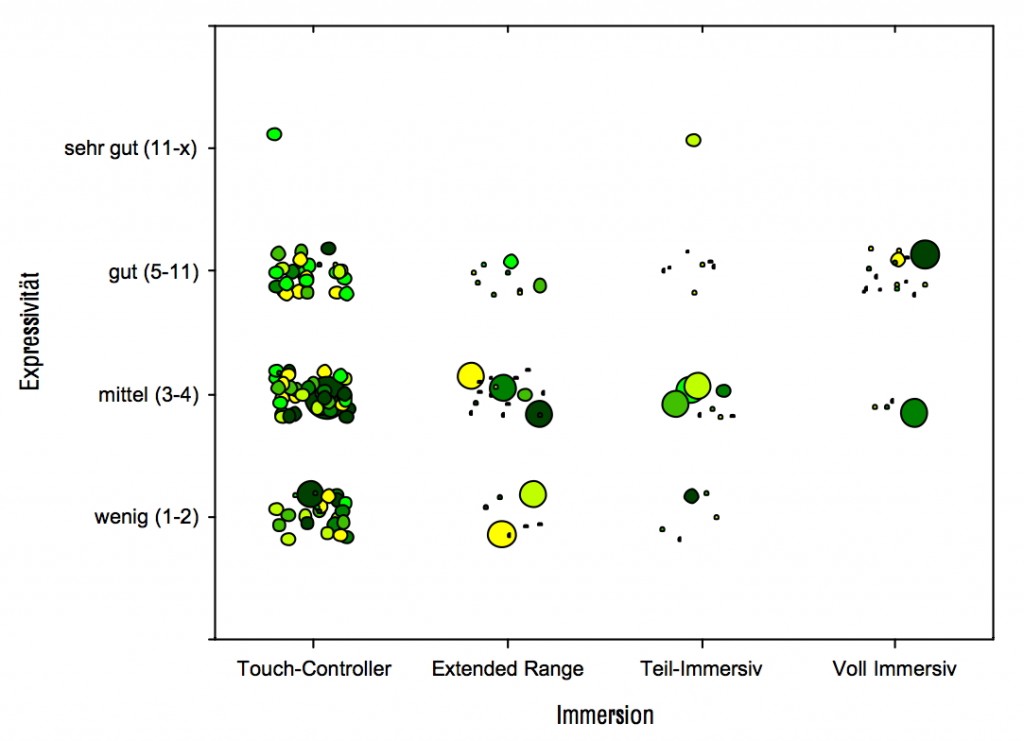

As early as 1985, Pennycook [15] offered a discussion of interface concepts and design issues. Pressing [18] proposed a set of fundamental design principles for computer-music interfaces. His exhaustive treatment of the topic laid the groundwork for further research on device characterization. Bongers [3] characterized musical interactions as belonging to one of three modes: Performer–System interaction, such as a performer playing an instrument; System–Audience interaction, such as that commonly found at interactive sound installations; and Performer–System–Audience interaction, which describes interactive systems in which both artist and audience interact in real time. Wanderley et al. [24] discussed two approaches to the classification of musical devices, including instruments and installations: the technological perspective and the semantical perspective. Jordà [7] characterized instruments in terms of music output complexity, control input complexity, and performer freedom. Focusing on interactive installations, Winkler [25] discussed digital, physical, social, and personal factors that should be considered in their design. In a similar way, Blaine and Fels [1] studied design features of collaborative musical systems, with the particular goal of elucidating design issues endemic to systems for novice players.

While these various approaches contribute insight to the problem of musical device classification, most did not provide a visual representation, which could facilitate device comparison and design. One exception is [24], which proposed a basic visualization employing two axes: type of user action and user expertise (Figure 1). Piringer [16] offers a more developed representation, as shown in Figure 2. However, both of these representations are limited to only a few dimensions. Furthermore, the configurations could be misread to imply orthogonality of the dimensions represented by the x- and y-axes.

Figure 1: The 2-dimensional representation of Wanderley et al. [24].

Figure 2: An example of a visual representation by Piringer [16]. “Expressivity” appears on the y-axis, with the categories very good, good, middle, and very little (top to bottom). “Immersion” appears on the x-axis, with the categories Touch-Controller, Extended-Range, Partially Immersive, and Fully Immersive, an adaptation from [11]. Each shape represents an instrument; the size indicates the amount of feedback and the color indicates feedback modality.

The goal of this text is to illustrate an efficient, visually-oriented approach to labeling, discussing, and evaluating a broad range of musical systems. Musical contexts in which these systems could be of interest include instrumental manipulation (e.g., [22]), control of pre-recorded sequences of events (see [10], [2]), control of sound diffusion in multi-channel sound environments, interaction in the context of (interactive) multimedia installations (for example, [25]), interaction in dance-music systems [4], and interaction in computer game systems. Systems in this diverse set place a range of demands on the user(s) that characterize the human-system interaction, and these demands can be studied with a focus on the underlying system designs. The HCI-driven approach chosen for this study is design space analysis.

2. Design space analysis

Initially proposed as a tool for software design in [8] and [9], design space analysis offers tools for examining a system in terms of a general framework of theoretical and practical design decisions. Through formal application of ‘QOC’ analysis composed of Questions about design, Options of how to address these questions, and Criteria regarding the suitability of the available options, one generates a visual representation of the design space of a system. In effect, this representation distinguishes the design rationale behind a system from the set of all possible design decisions. MacLean [8] outlines two goals of the design space analysis approach: to “develop a technique for representing design decisions which will, even on its own, support and augment design practice,” and to “use the framework as a vehicle for communicating and contextualising more analytic approaches to usersystem [sic] interaction into the practicalities of design.”

2.1 Dimension Space Analysis

Dimension space analysis is a related approach to system design that retains the goals of supporting design practice and facilitating communication [6]. Although dimension space analysis does not explicitly incorporate the QOC method of outlining the design space of a system, it preserves the notion of a system inhabiting a finite space within the space of all possible design options, and it sets up the dimensions of this space to correspond to various object properties.

The Dimension Space outlined by Graham [6] represents interactive systems on six axes. Each system component is plotted as a separate dimension space so that the system can be examined from several points of view. Some axes represent a continuum from one extreme to another, such as the Output Capacity axis, whose values range from low to high. Others contain only a few discrete points in logical progression, such as Attention Received, which contains the points high, peripheral, and none. The Role axis is the most eccentric, containing five unordered points.

A dimension plot is generated by placing a point on each axis and connecting the points to form a two-dimensional shape. Each plot is created from the perspective of a specific entity involved in the interaction. Systems and their components can then be compared rapidly by comparing their respective plots. The shape of an individual plot, however, carries no intended meaning. The flexibility of the dimension space approach lies in the ability to redefine the axes. In adapting this method, the choice of axes and their possible values is made with respect to the range of systems being considered and the significant features to be used to distinguish among them. Plotting a system onto a Dimension Space is an exercise that forces the designer to examine each of its characteristics individually, and it exposes important issues that may arise during the design or use of a system.
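The plotting step described above can be sketched in a few lines. This is a minimal illustration and not the procedure used to draw the figures in this paper: the function name `dimension_plot_vertices`, the normalization of every axis to the range [0, 1], and the clockwise layout starting at the top are all assumptions made for the example.

```python
import math


def dimension_plot_vertices(values):
    """Return the (x, y) vertices of a dimension plot.

    `values` holds one normalized position per axis, each in [0, 1],
    where 0 is the centre of the plot and 1 is the outer end of the axis.
    Axes are spread evenly around the centre, starting at the top and
    proceeding clockwise (an assumed layout, chosen for illustration).
    """
    n = len(values)
    if n < 3:
        raise ValueError("a closed shape needs at least three axes")
    vertices = []
    for i, v in enumerate(values):
        if not 0.0 <= v <= 1.0:
            raise ValueError(f"axis {i}: value {v} is outside [0, 1]")
        # Angle of axis i, measured from the positive x-axis.
        angle = math.pi / 2 - 2 * math.pi * i / n
        vertices.append((v * math.cos(angle), v * math.sin(angle)))
    return vertices


if __name__ == "__main__":
    # Hypothetical seven-axis device; the numbers are placeholders.
    for x, y in dimension_plot_vertices([0.9, 1.0, 0.5, 0.5, 0.1, 0.1, 1.0]):
        print(f"{x:+.3f} {y:+.3f}")
```

Connecting consecutive vertices, and the last back to the first, yields the closed shape. Because the axis order and scaling are free choices, the resulting outline is only a comparison aid, consistent with the remark that the shape itself carries no intended meaning.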

We illustrate one possible adaptation of the multi-axis graph of Graham et al. [6] to classify and plot musical devices ranging from digital musical instruments to sound installations. For this exercise, we chose axes that would meaningfully display design differences among devices, and plotted each device only once, rather than creating multiple plots from different perspectives.

2.2 An Example Dimension Space

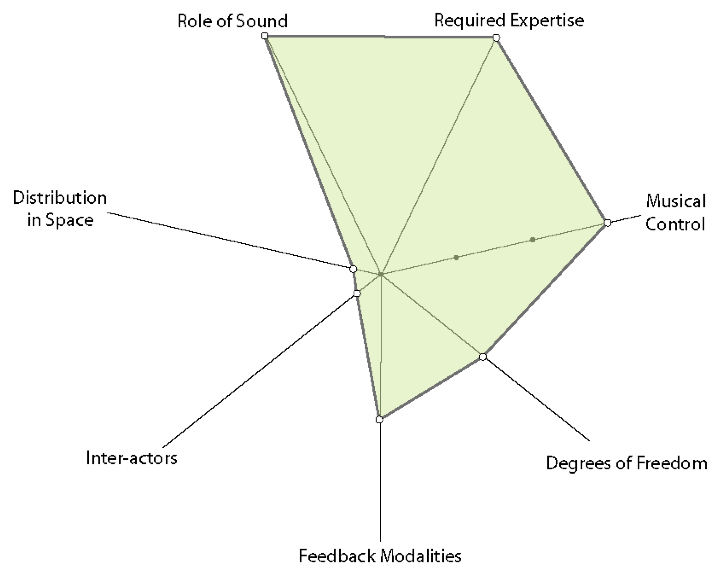

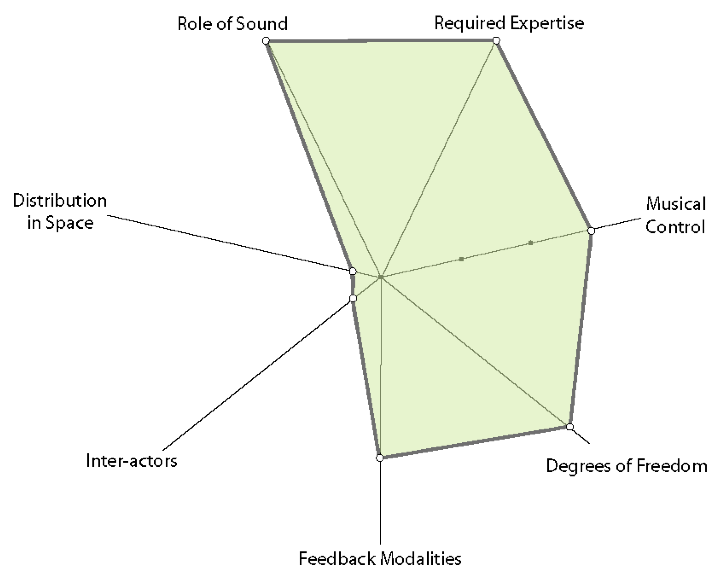

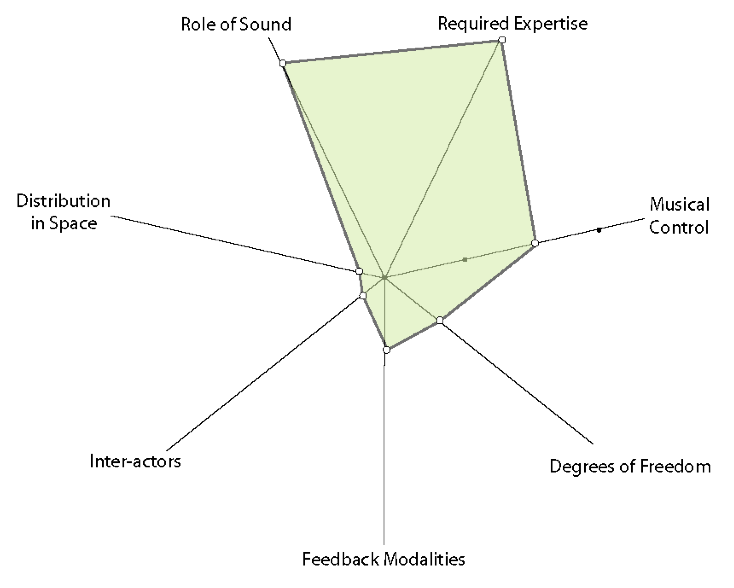

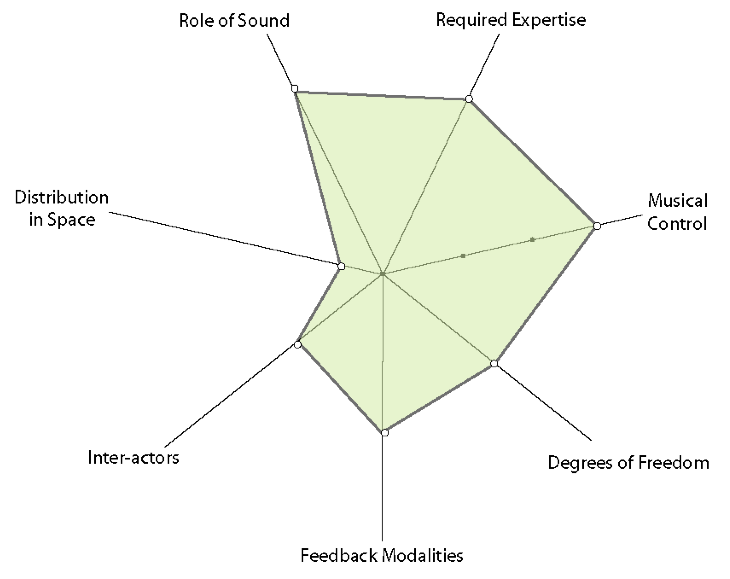

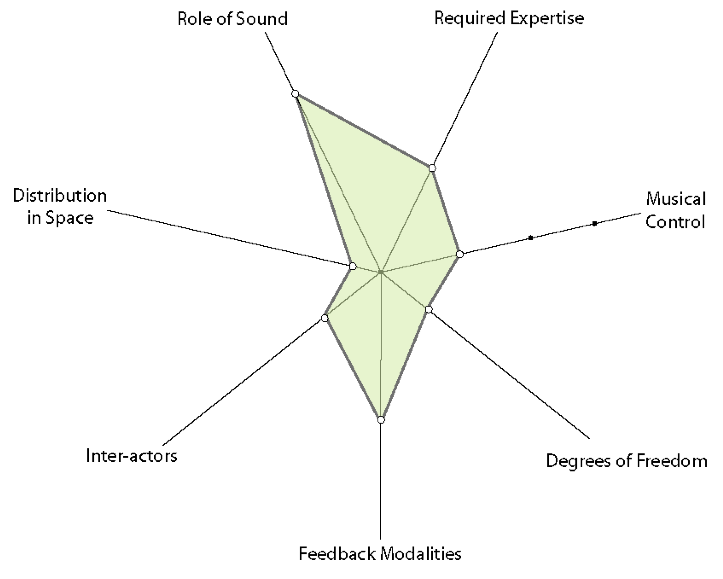

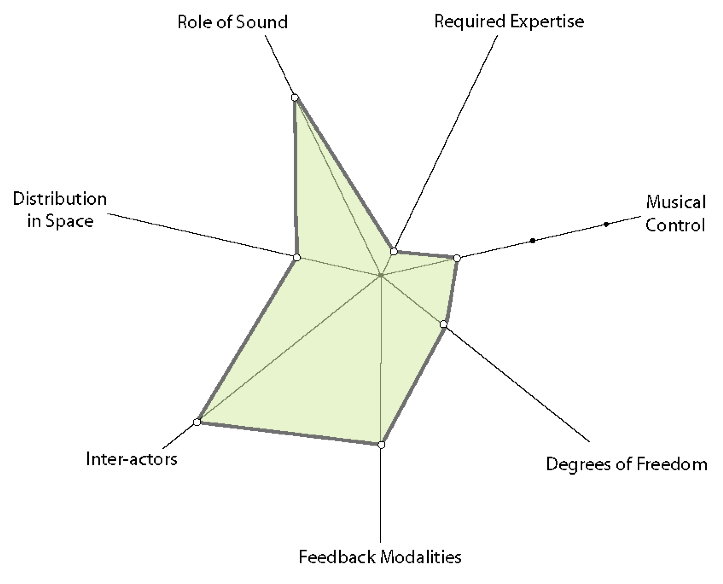

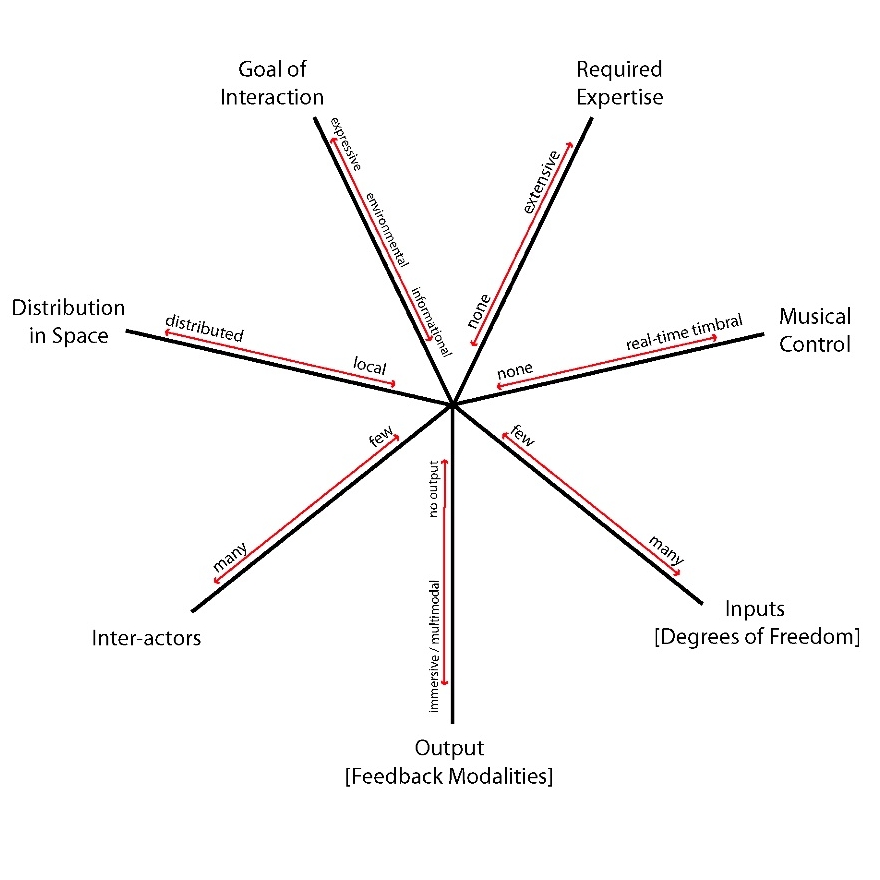

In adapting the dimension space to the analysis of musical devices, we explored several quantities and configurations of axes. We determined subjectively that the spaces remained functional in plots with as many as eight axes. As an example, Figure 3 shows a seven-axis configuration, labeled with representative ranges. Figure 4 shows plots of several devices, drawn from the areas of digital musical instruments and interactive installations incorporating sound and/or music. Each of the axes is described in detail below.

- The Required Expertise axis represents the level of practice and familiarity with the system that a user or performer should possess in order to interact as intended with the system. It is a continuous axis ranging in value from low to high expertise.

- The Musical Control axis specifies the level of control a user exerts over the resulting musical output of the system. The axis is not continuous; rather, it contains three discrete points following the characterization of [19], which distinguishes three possible levels of control over musical processes: timbral level, note level, and control over a musical process.

- The Feedback Modalities axis indicates the degree to which a system provides real-time feedback to a user. Typical feedback modes include visual, auditory, tactile, and kinesthetic [24].

- The Degrees of Freedom axis indicates the number of input controls available to a user of a musical system. This axis is continuous, representing devices with few inputs at one extreme and those with many at the other extreme.

- The Inter-actors axis represents the number of people involved in the musical interaction. Typically, interactions with traditional musical instruments feature only one inter-actor, but some digital musical instruments and installations are designed as collaborative interfaces (see [5], [1]), and a large installation may involve hundreds of people interacting with the system at once [21].

- The Distribution in Space axis represents the total physical area in which the interaction takes place, with values ranging from local to global distribution. Musical systems spanning several continents via the Internet, such as Global String, are highly distributed [20].

- The Role of Sound axis uses Pressing’s [17] categories of sound roles in electronic media. The axis ranges over three main values: artistic/expressive, environmental, and informational.
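The seven axes listed above can be written down as a small data structure, which makes explicit which axes are continuous and which carry discrete points. This is a sketch under stated assumptions: the `Axis` class and its `position` method are hypothetical names, the ordering of the discrete points along each axis is chosen arbitrarily, and axes for which the text does not specify discrete points (Feedback Modalities, Inter-actors, Distribution in Space) are treated as continuous for simplicity.

```python
from dataclasses import dataclass
from typing import Optional, Tuple, Union


@dataclass(frozen=True)
class Axis:
    name: str
    # Discrete points in plotting order, or None for a continuous axis.
    points: Optional[Tuple[str, ...]] = None

    def position(self, value: Union[float, str]) -> float:
        """Map a value on this axis to a normalized position in [0, 1]."""
        if self.points is None:
            pos = float(value)
            if not 0.0 <= pos <= 1.0:
                raise ValueError(f"{self.name}: {value!r} is outside [0, 1]")
            return pos
        # tuple.index raises ValueError for a label the axis does not define.
        return self.points.index(value) / (len(self.points) - 1)


# Point orderings below are assumptions made for this example.
AXES = (
    Axis("Required Expertise"),
    Axis("Musical Control", ("musical process", "note level", "timbral level")),
    Axis("Feedback Modalities"),
    Axis("Degrees of Freedom"),
    Axis("Inter-actors"),
    Axis("Distribution in Space"),
    Axis("Role of Sound", ("informational", "environmental", "artistic/expressive")),
)

if __name__ == "__main__":
    for axis in AXES:
        kind = "continuous" if axis.points is None else "discrete"
        print(f"{axis.name}: {kind}")
```

Keeping the axis definitions separate from any one device reflects the point made earlier that the axes can be redefined to suit the range of systems under consideration.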

3. Trends in dimension plots

The plots of Michel Waisvisz’ The Hands (Figure 4(a)) and Todd Winkler’s installation Maybe… 1910 (Figure 4(h)) provide contrasting examples of the dimension space in use. The Hands requires a high level of user expertise, allows timbral control of sound (depending on the mapping used), and has a moderate number of inputs and outputs. The number of inter-actors is low (one), the distribution in space is local, and the role of the produced sound is expressive. The installation Maybe… 1910 is very different: the required expertise and number of inputs are low, and only control of high-level musical processes (playback of sound files) is possible. The number of output modes is quite high (sights, sounds, textures, smells), as is the number of inter-actors. The distribution in space of the interaction, while still local, is larger than that of most instruments, and the role of sound is primarily the exploration of the installation environment.
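The contrast between the two devices can be made concrete by recording the qualitative values given above and listing the axes on which they differ. The sketch below is illustrative only: the dictionary layout and the `differing_axes` helper are hypothetical, the labels paraphrase the description in this section rather than the plotted values in Figure 4, and reading the role of sound in Maybe… 1910 as "environmental" is an assumption.

```python
THE_HANDS = {
    "Required Expertise": "high",
    "Musical Control": "timbral level",
    "Feedback Modalities": "moderate",
    "Degrees of Freedom": "moderate",
    "Inter-actors": "low (one)",
    "Distribution in Space": "local",
    "Role of Sound": "artistic/expressive",
}

MAYBE_1910 = {
    "Required Expertise": "low",
    "Musical Control": "musical process",
    "Feedback Modalities": "high",
    "Degrees of Freedom": "low",
    "Inter-actors": "high",
    "Distribution in Space": "local, larger than most instruments",
    # Assumption: "exploration of the installation environment" is read
    # here as the environmental role of sound.
    "Role of Sound": "environmental",
}


def differing_axes(a, b):
    """Return (axis, value_a, value_b) for every axis where a and b differ."""
    return [(axis, a[axis], b[axis]) for axis in a if a[axis] != b[axis]]


if __name__ == "__main__":
    for axis, hands, maybe in differing_axes(THE_HANDS, MAYBE_1910):
        print(f"{axis}: {hands} vs. {maybe}")
```

With these labels the two devices differ on every axis, which matches the description of them as contrasting examples.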

When comparing these plots, and those of other music devices, it became apparent that the grouping of axes used caused the plots of instruments to shift to the right side of the graph, and plots of installations to shift to the left. Installations commonly involve more people at the point of interaction, with the expectation that they are non-experts. Also, installations are often more distributed in space than instruments, which are intended to offer more control and a high potential for expressivity, achieved by offering more degrees of freedom. Sequencing tools, games, and toys typically occupy a smaller but still significant portion of the right side of the graph.

4. Conclusions

We have demonstrated that a dimension space paradigm allows visual representation of digital musical instruments, sound installations, and other variants of music devices. These dimension spaces are useful for clarifying the process of device development, as each relevant characteristic is defined and isolated. Furthermore, we found that the seven-axis dimension space resulted in visible trends between plots of related devices, with instrument-like devices tending to form one distinct shape and installations forming another shape. These trends can be used to present a geometric formulation of the relationships among existing systems, of benefit to device characterization and design.

Our future work in this direction might include further refinement of the system of axes, including changing the number of axes, or their definitions. Furthermore, a major problem remains insofar as the current plots are based partly on a subjective assessment of the devices. This assessment should be verified with empirical measurements from user tests [23]. Others who wish to employ dimension space analysis can adapt or change the axes as needed, though in the future a standard set of axes more universal in appeal may emerge.

5. References

[1] Blaine, T., Fels, S. Contexts of collaborative musical experiences. In Proceedings of the International Conference on New Interfaces for Musical Expression, pp. 129–134. Montreal, Canada, 2003.

[2] Boie, R., Mathews, M. The radio drum as a synthesizer controller. In Proceedings of the International Computer Music Conference, 1989.

[3] Bongers, B. Physical interaction in the electronic arts: Interaction theory and interfacing techniques for real-time performance. In Wanderley, M. M. and Battier, M., editors, Trends in Gestural Control of Music. Ircam, Centre Pompidou, France, 2000.

[4] Camurri, A. Interactive dance/music systems. In Proceedings of the International Computer Music Conference, pp. 245–252, 1995.

[5] Fels, S., Vogt, F. Tooka: Explorations of two person instruments. In Proceedings of the International Conference on New Interfaces for Musical Expression, pp. 129–134. Dublin, Ireland, 2002.

[6] Graham, T. C. Nicholas, Watts, Leon A., Calvary, Gaëlle, Coutaz, Joëlle, Dubois, Emmanuel, Nigay, Laurence. A dimension space for the design of interactive systems within their physical environments. In Proceedings of the Conference on Designing Interactive Systems, pp. 406–416. ACM Press, 2000.

[7] Jordà, S. FMOL: Toward user-friendly, sophisticated new musical instruments. Computer Music Journal, 26(3):23–39, 2002.

[8] MacLean, A., Bellotti, V., Shum, S. Developing the design space with design space analysis. In P. F. Byerley, P. J. Barnard, and J. May, editors, Computers, Communication and Usability: design issues, research and methods for integrated services, pp. 197–219. North Holland Series in Telecommunication, Elsevier, Amsterdam, 1993.

[9] MacLean, A., McKerlie, D. Design space analysis and use-representations. Technical Report EPC-1995-102, Rank Xerox Research Centre, Cambridge, September 1994.

[10] Mathews, M. The conductor program and mechanical baton. In M. Mathews and J. Pierce, editors, Current directions in computer music research, pp. 263–281. MIT Press, Cambridge, Massachusetts, 1989.

[11] Mulder, A. Design of virtual three-dimensional instruments for sound control. PhD thesis, Simon Fraser University, 1998.

[12] Newton-Dunn, H., Nakano, H., Gibson, J. Block Jam: A tangible interface for interactive music. In Proceedings of the International Conference on New Interfaces for Musical Expression, pp. 170–177. Montreal, Canada, 2003.

[13] Palacio-Quintin, C. The Hyper-Flute. In Proceedings of the International Conference on New Interfaces for Musical Expression, pp. 206–207. Montreal, Canada, 2003.

[14] Paradiso, J. The Brain Opera technology: New instruments and gestural sensors for musical interaction and performance. Journal of New Music Research, 28(2):130–149, 1999.

[15] Pennycook, B. W. Computer-music interfaces: A survey. Computing Surveys, 17(2):267–289, 1985.

[16] Piringer, J. Elektronische Musik und Interaktivität: Prinzipien, Konzepte, Anwendungen. PhD thesis, Institut für Gestaltungs- und Wirkungsforschung der Technischen Universität Wien, October 2001.

[17] Pressing, J. Some perspectives on performed sound and music in virtual environments. Presence, 6:1–22, 1997.

[18] Pressing, J. Cybernetic issues in interactive performance systems. Computer Music Journal, 14(1):12–25, 1990.

[19] Schloss, W. A. Recent advances in the coupling of the language Max with the Mathews/Boie Radio Drum. In Proceedings of the International Computer Music Conference, pp. 398–400, 1990.

[20] Tanaka, A., Bongers, B. Global string: A musical instrument for hybrid space. In M. Fleischmann and W. Strauss, editors, Proceedings: cast01 // Living in Mixed Realities, pp. 177–181. MARS Exploratory Media Lab, FhG – Institut Medienkommunikation, 2001.

[21] Ulyate, R., Bianciardi, D. The interactive dance club: Avoiding chaos in a multi participant environment. In Proceedings of the International Conference on New Interfaces for Musical Expression, Seattle, Washington, 2001.

[22] Waisvisz, M. The Hands, a set of remote MIDI-controllers. In Proceedings of the International Computer Music Conference, pp. 313–318, 1985.

[23] Wanderley, M. M., Orio, N. Evaluation of input devices for musical expression: Borrowing tools from HCI. Computer Music Journal, 26(3):62–76, 2002.

[24] Wanderley, M. M., Orio, N., Schnell, N. Towards an analysis of interaction in sound generating systems. In ISEA2000 Conference Proceedings, December 2000.

[25] Winkler, T. Audience participation and response in movement-sensing installations. In Proceedings of the International Computer Music Conference, December 2000.

NIME-05, Vancouver, Canada

© 2005. Copyright remains with the author(s).